APIOps: End-to-End Automation Throughout the API Lifecycle

It is a truth universally acknowledged that the culture change side of any technology transformation program is the hardest and slowest part to get right. If you cannot efficiently operationalize a technology investment, that investment is wasted. This is no different in the world of APIs and microservices, where every service is designed to support a change to a digital-first culture. APIOps makes this change possible.

What is APIOps?

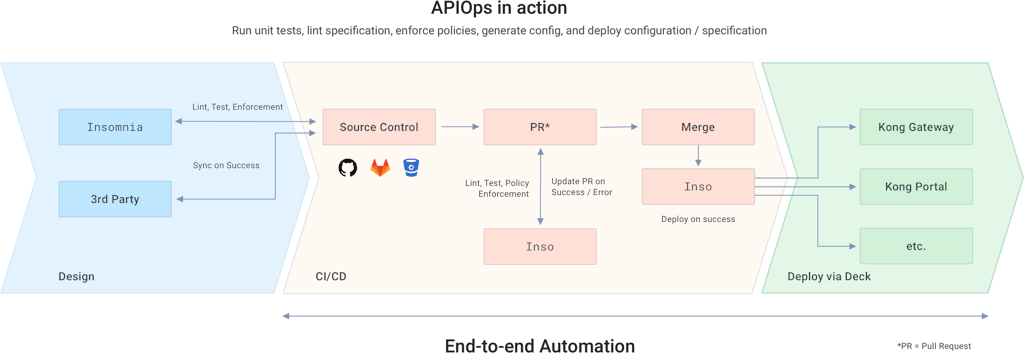

The concept of APIOps is quite simple: We're applying the solid and proven principles of DevOps and GitOps to the API and microservice lifecycle.

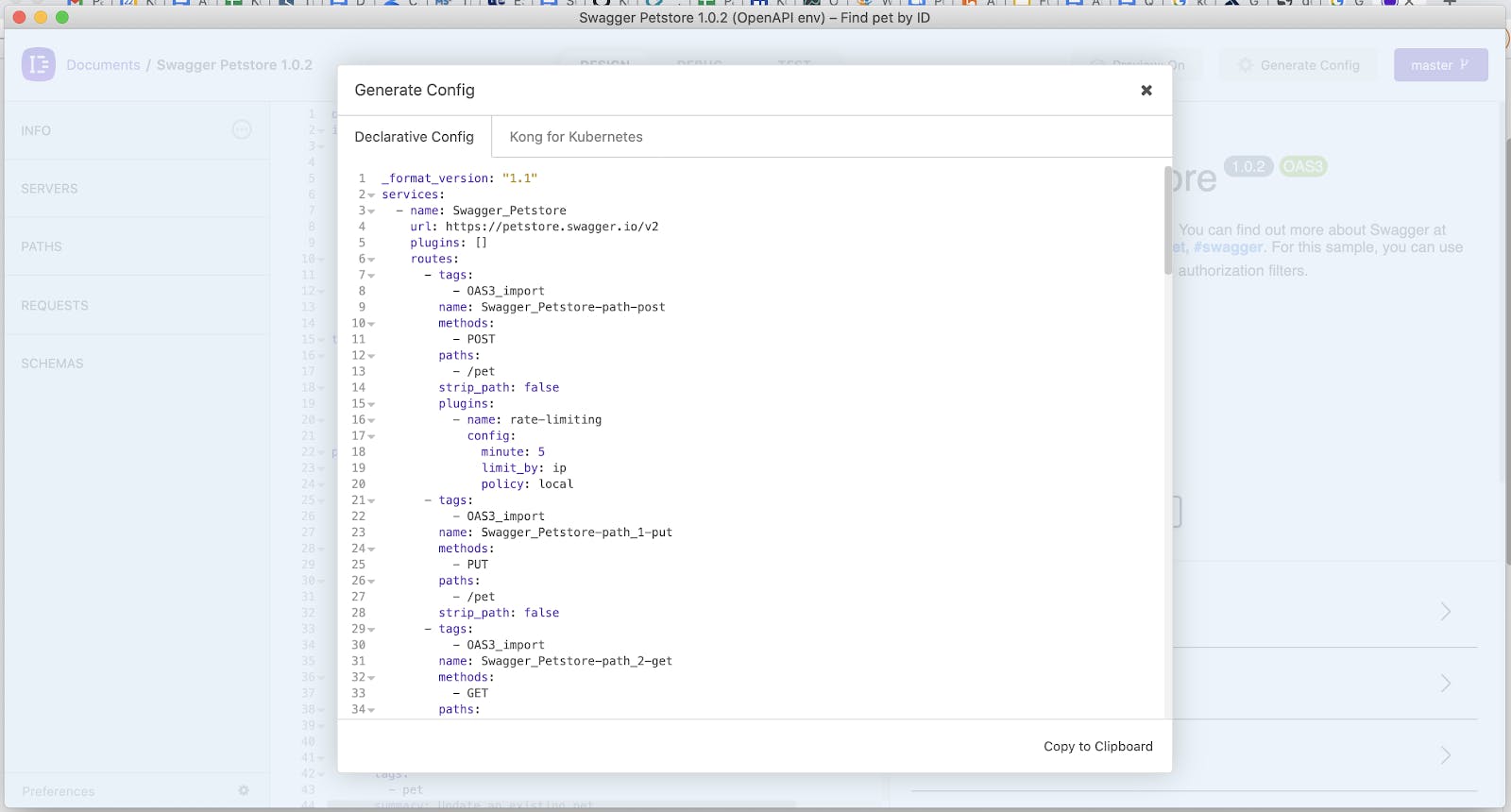

An OpenAPI (or other specification) contract, created at design time, defines the service interface; the implementation logic, built to that contract in whatever framework(s) you want to use, defines how the service works; and declarative configuration, generated from the contract, defines how the service sits in your ecosystem. That declarative configuration is a versioned set of instructions describing the deployed state of the service throughout its lifespan, including which security and governance policies are applied to which endpoints and methods, and it can be generated automatically at any stage of the lifecycle.

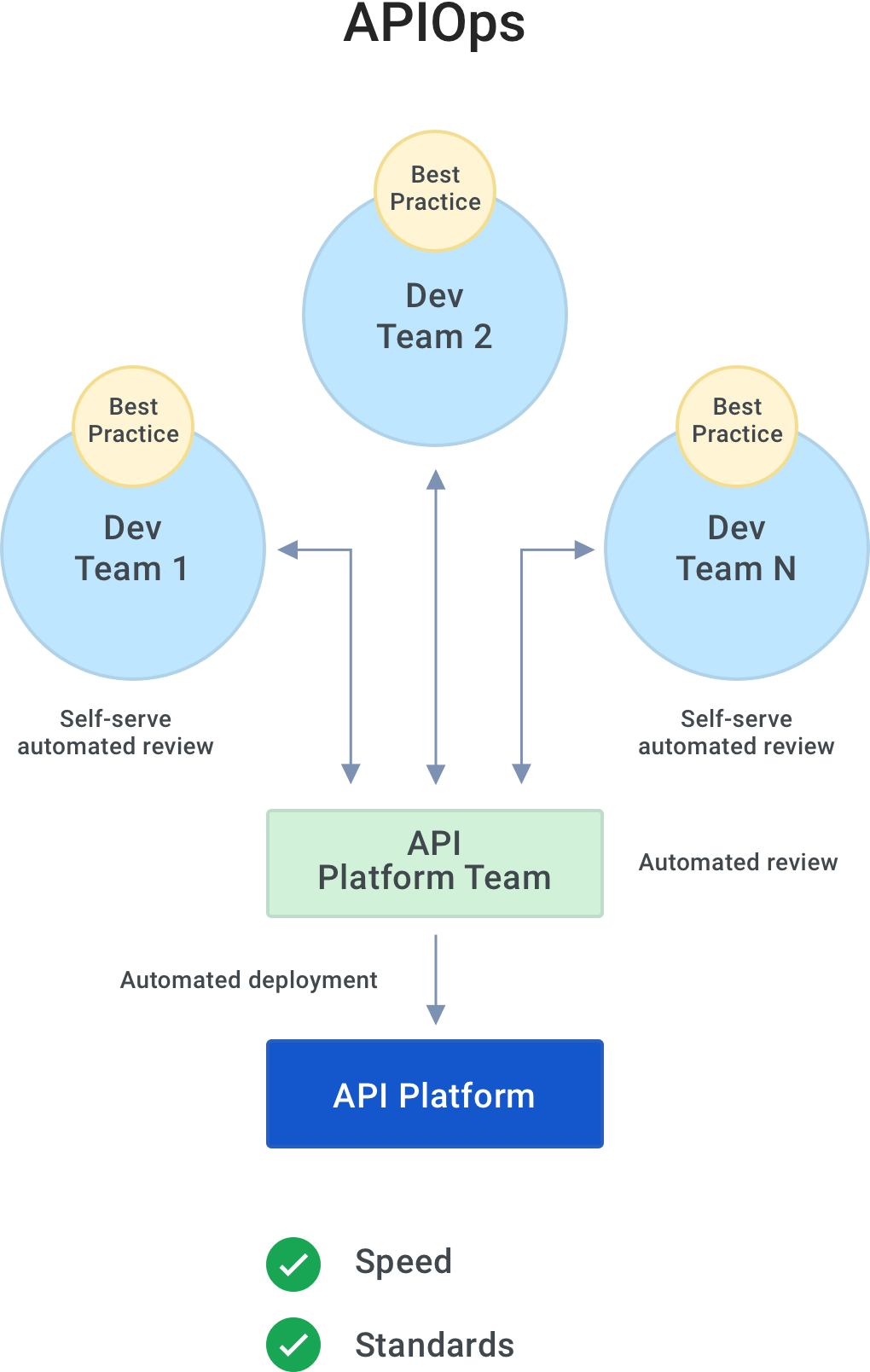

With APIOps, we also equip every user, whether developers, architects or DevOps, with self-serve and automated tools that ensure the quality of the specs and APIs that are being built at every step of the lifecycle. The earlier in the pipeline you can identify deviations from your standards, the faster they are to resolve. The greater the number of services you build following this approach, the greater the consistency between them and the smaller the chance of deploying a service that is of too poor quality to be consumed.

This is how we've set up the service lifecycle in Konnect - with end-to-end automation and tooling to support consistent, continuous delivery of all your connectivity services, regardless of who's building them, the tools they use and the target deployment environment.

APIOps Increases Speed

Just like with GitOps, APIOps uses declarative config at deploy time to enable completely automated deployments within Konnect. This is not the deployment of the API implementation code but the automated configuration of the Kong runtimes to secure and govern the endpoints in that code according to those instructions.

For example, your declarative config can be generated at the beginning of the deployment process, detecting from the API contract that the GET /customers operation needs to be secured with an OIDC policy and instructing Konnect how to do so. Konnect then applies that policy and configures itself based on those instructions. No need to write your own script to invoke the platform APIs to try and automate this yourself in your CI/CD pipelines.
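To make the GET /customers example concrete, here is a minimal Python sketch of how a declarative config could be derived from an API contract. It is an illustration under stated assumptions, not what Insomnia or Konnect actually generates: the function name `generate_declarative_config`, the upstream URL, and the choice of an `openid-connect` plugin entry with an `issuer` field are all assumptions made for the example, and the output only loosely follows the services/routes/plugins shape of a Kong-style declarative file.

```python
import json
from typing import Any

HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options"}


def generate_declarative_config(spec: dict[str, Any], upstream_url: str) -> dict[str, Any]:
    """Build a gateway config (one service, one route per operation) from an OpenAPI-style dict."""
    schemes = spec.get("components", {}).get("securitySchemes", {})
    routes = []
    for path, operations in spec.get("paths", {}).items():
        for method, operation in operations.items():
            if method.lower() not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            # Operation-level security takes precedence over the spec-wide default.
            requirements = operation.get("security", spec.get("security", []))
            plugins = []
            for requirement in requirements:
                for scheme_name in requirement:
                    scheme = schemes.get(scheme_name, {})
                    if scheme.get("type") == "openIdConnect":
                        # Assumed plugin name and config field; adjust to your gateway.
                        plugins.append({
                            "name": "openid-connect",
                            "config": {"issuer": scheme.get("openIdConnectUrl")},
                        })
            routes.append({
                "name": operation.get("operationId", f"{method}-{path}"),
                "methods": [method.upper()],
                "paths": [path],
                "plugins": plugins,
            })
    service_name = spec.get("info", {}).get("title", "api").lower().replace(" ", "-")
    return {
        "_format_version": "3.0",
        "services": [{"name": service_name, "url": upstream_url, "routes": routes}],
    }


if __name__ == "__main__":
    # Hypothetical contract: one operation secured with an OIDC scheme.
    example_spec = {
        "info": {"title": "Customers API"},
        "components": {"securitySchemes": {"oidc": {
            "type": "openIdConnect",
            "openIdConnectUrl": "https://idp.example.com/.well-known/openid-configuration",
        }}},
        "paths": {"/customers": {"get": {
            "operationId": "listCustomers",
            "security": [{"oidc": []}],
        }}},
    }
    config = generate_declarative_config(example_spec, "http://customers.internal:8080")
    print(json.dumps(config, indent=2))
```

The design point is that the security requirement lives in the contract, and the gateway configuration is derived from it rather than scripted by hand in each pipeline.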

The declarative config can also be generated at design time, both to enforce known runtime constraints such as security policies and to rapidly deploy and test APIs in a developer's local Konnect environment while they are still being designed or built, before they progress through the pipeline.

This means we can achieve:

- Faster, repeatable deployments into our API ecosystem - whether onboarding new developers or teams, instant local deployments for testing or deploying into existing environments - saving us time and getting our services into production more quickly

- Faster time-to-market by catching deviations from standards early on in the pipeline

- Reduced risk through the fully automated application and configuration of security policies

- Easy rollbacks to previous deployment configurations using historical declarative config files from your VCS

APIOps Raises Quality

The second, equally important outcome of following an APIOps approach is a consistent enforcement of standards. This covers both API best practices and adherence to security and governance policies, both of which are necessary to ensure that your services are consumable.

We gain:

- Consistent quality and standards across all APIs and services by embedding automated governance checks and tests into CI pipelines, and using those pipelines for the lifecycle of every service

- Happier and more productive developers who can ensure their own specs meet standards, without having to read any documentation or switch tooling away from the Insomnia API design environment

- Better collaboration and fewer pushbacks and refactoring needed between developers and platform owners because APIs marked as ready for deployment are more likely to follow standards

- Consistency across deployment environments, teams and processes due to the declarative configuration providing a single source of truth for the deployed and managed state of the API

- Smoother deployments, because a version-controlled declarative configuration file lets us see the changes between configurations and so spot what is likely to break before it does
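The last point, seeing changes between configurations, can be sketched with nothing more than the Python standard library. This is a minimal illustration, assuming both configurations are already loaded as dictionaries; the function name `config_diff` and the sample configs are hypothetical.

```python
import difflib
import json


def config_diff(deployed: dict, proposed: dict) -> list[str]:
    """Return a unified diff between two declarative configs."""

    def render(config: dict) -> list[str]:
        # Sorting keys keeps the diff stable regardless of key order in the files.
        return json.dumps(config, indent=2, sort_keys=True).splitlines()

    return list(
        difflib.unified_diff(
            render(deployed),
            render(proposed),
            fromfile="deployed",
            tofile="proposed",
            lineterm="",
        )
    )


if __name__ == "__main__":
    # Hypothetical configs: the proposed version drops a security plugin.
    deployed = {"routes": [{"paths": ["/customers"], "plugins": [{"name": "openid-connect"}]}]}
    proposed = {"routes": [{"paths": ["/customers"], "plugins": []}]}
    for line in config_diff(deployed, proposed):
        print(line)
```

A reviewer (or a pipeline step) reading this diff would see the security plugin disappearing before the change is deployed, and the previous file in version control remains available for rollback.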

API Lifecycle Management

We need both the right strategy and the right lifecycle to make this happen. In this post, we examine the latter with APIOps - what does your service lifecycle need to look like to support the creation of reliable and high-quality digital experiences across a distributed ecosystem at scale?

The easy answer here is to say "you should build every API for reuse," but the reality is far more complex. Who is "you"? How do you enforce the "should"? What else must we consider along with the actual "build" of APIs? What do we consider an "API" nowadays (hint: we can't just focus on RESTful services anymore)? And what does a service need to look like to actually be "reusable"?

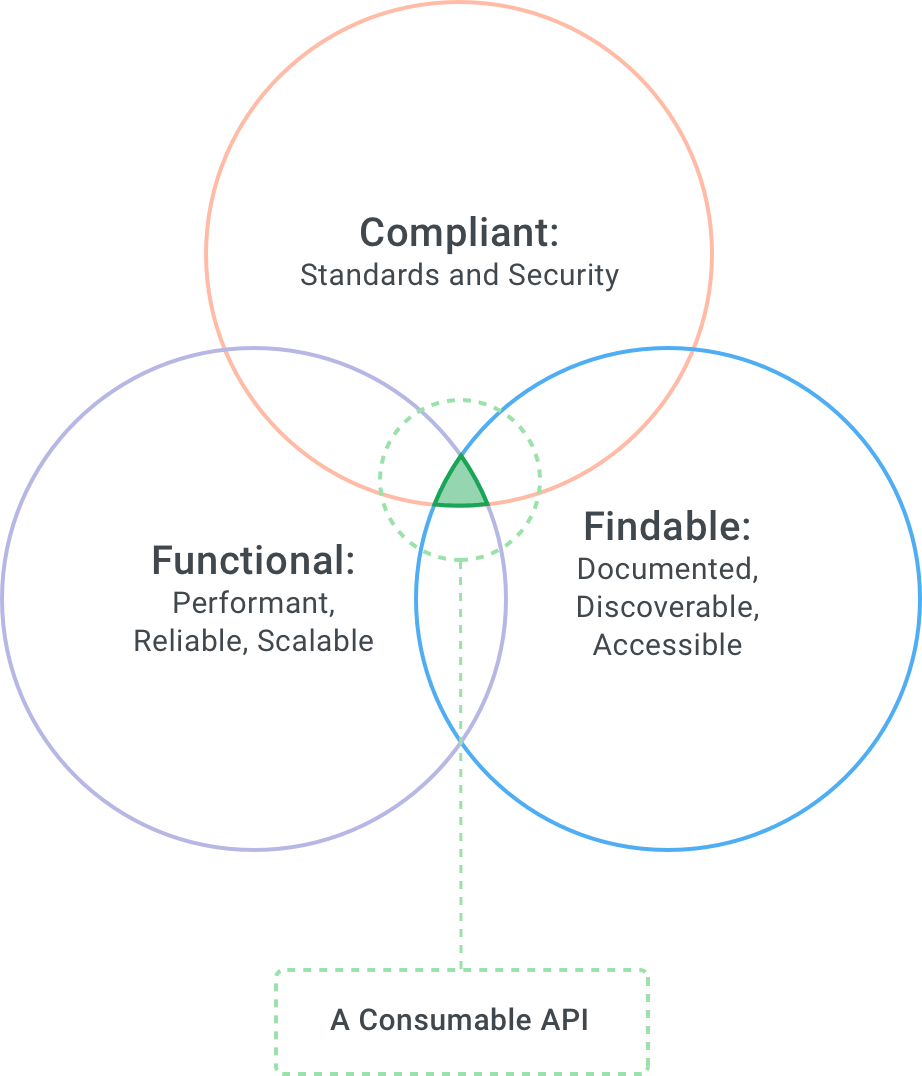

Let's start with that last point first - what does reusable mean? In the early days of API management, it meant sticking your APIs in a themed portal so consumers could find and access them. This is still a necessity but only one of many. Reuse is actually a much more complex equation dependent on a number of factors, from the operating model within your organization to the experience you need to provide to the end user. For services to be reusable, they should be developed with a target audience in mind and meet a business objective.

The culture around those services is often then based on a product mindset, in which we consider each of our services to be products with their own lifecycles, rather than technical entities in the background. For any of those "products" to be reused, which is what brings you operational efficiency and agility, they need to be consumable. This means they are:

- Well-designed, secured and compliant, following the industry and your organization's usage and security best practices

- Documented, discoverable and accessible for your consumers to find and (re)use

- Performant, reliable and scalable so consumers receive a high-quality service from you and have confidence to continue reusing your services

If any one of these properties is missing, you might still be able to deploy your APIs and services, but they will not deliver value. At best, they will be inconsistent or unreliable and therefore impossible to use; at worst, they will be the security risk you've accidentally exposed, the one that puts you in the news for a breach of your customers' private information. The gravity of these consequences means that tech leaders are now prioritizing API and application quality over delivery speed.

Ensuring all of our services carry these properties, and are therefore consumable, is vital for reuse. The way to do this is by operationalizing and automating the service lifecycle with APIOps. However, given the increasingly distributed team and technology setups across most companies, today this typically ends up as a trade-off between the speed and the quality of your delivery. As we shall see later, APIOps means you don't have to make that trade-off.

Perhaps you're at the beginning of your microservices journey, or perhaps this is the fourth API program you’re defining and you already have a lot of experience operating at scale. Ask yourself this: how often (and be honest) have you really seen a design-first approach followed every time, especially 12+ months down the line once the initial enthusiasm has worn off?

How many times have you seen glorious-sounding API-first roadmaps stutter to a halt? It's easy to get the tech in (especially Kong Konnect), but the biggest challenge we're seeing companies face today is how they can ensure consistency and compliance across distributed services of different types, running in different cloud and on-premise environments, and built, owned and operated by different, distributed teams - especially since all too often the platform guardrails don’t exist to ensure you stay true to the ambition.

Why the Trade-Off?

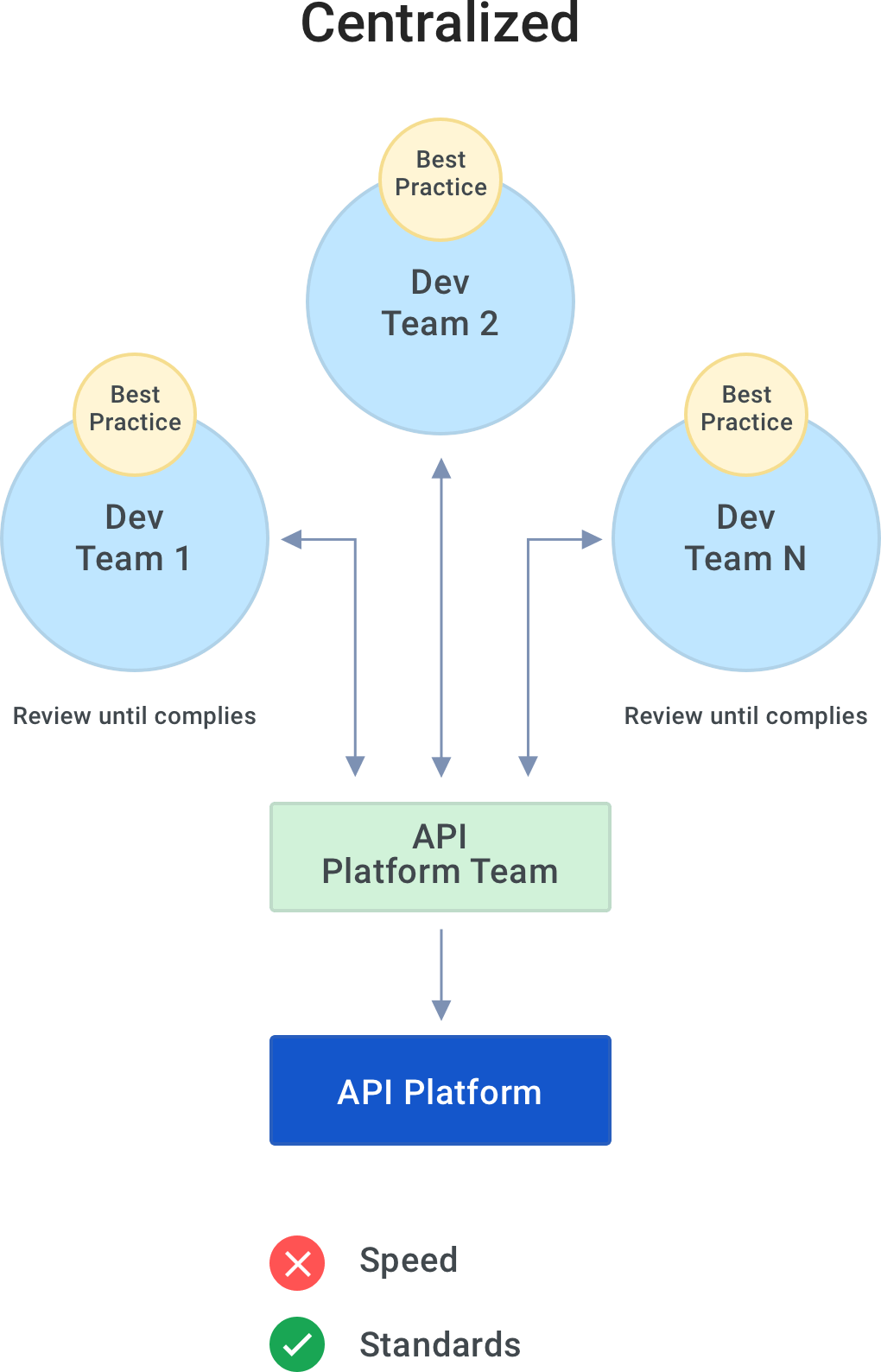

Some organizations - typically those at the very beginning of an API program - give developers free rein to deploy, manage and publish their APIs without quality gates. This allows them to move at speed; however, with distributed development teams following different processes, the end result is an API platform full of inconsistency. Services designed and built in this way will likely overlap and have different (or non-existent) documentation and security - and therefore, they will not be reused.

Most organizations running an API program therefore have some form of API platform team or group of governors who review API designs and implementations before they're deployed, to make sure that everything follows standards. By definition, this creates a bottleneck. Our governors are meant to ensure that the overall architecture, infrastructure and capabilities surrounding the API platform meet requirements, but in this model, the consistency of services in the API platform - and therefore their consumability and subsequent reuse - is entirely dependent on these poor folks either spending time manually reviewing every new service and version or building a whole load of custom tools and workflows to do so. This isn't fair or sustainable; it just does not scale.

The more mature companies have alleviated this pain to some degree by spending the time documenting and evangelizing their API best practice, and setting the expectation with every developer that they have to read, internalize and follow that best practice. The intention here is a good one - you don't want developers to be submitting code that they themselves haven't checked for compliance because then you'll have even more rework to do.

But seeing success here is now 100% dependent on each and every developer reading and remembering these standards and manually making sure they do actually follow them. This makes it easier for the API platform team, but actually you're just shifting the burden of responsibility to developers, and you haven't made it any easier overall.

In a modern world of automation and self-sufficiency, we can and we should do better than this.

Konnect Enables APIOps

As the API designer/developer…

When designing your API in Insomnia - which has specific capabilities designed to support this APIOps model of end-to-end automation - your OpenAPI (Swagger) spec is instantly linted and validated so you know it follows industry best practices. You can build and generate unit tests for the service functionality and, still within the spec, prescribe the Kong policies or plugins that need to be applied to the endpoints so that they'll be properly governed and secured. You can then automatically generate the declarative config in Insomnia, which lets you instantly set up your local Kong gateway instance and quickly test your API, before using the Git Sync feature to commit and push your API to the right repo.

Outcome(s): API is governed at design time; instant local testing of design and governance
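As a rough illustration of what design-time linting checks for, the sketch below applies two simple rules to a spec held as a Python dictionary. It does not reproduce Insomnia's linter or its rule set; the function name `lint_spec` and both rules (every operation needs an `operationId` and a non-empty security requirement) are hypothetical examples of an organization's standards.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options"}


def lint_spec(spec: dict) -> list[str]:
    """Return human-readable findings; an empty list means the spec passed."""
    findings = []
    default_security = spec.get("security")
    for path, operations in spec.get("paths", {}).items():
        for method, operation in operations.items():
            if method.lower() not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            label = f"{method.upper()} {path}"
            if not operation.get("operationId"):
                findings.append(f"{label}: missing operationId")
            # An explicit empty list means "no security" and is flagged too.
            if not operation.get("security", default_security):
                findings.append(f"{label}: no security requirement declared")
    return findings


if __name__ == "__main__":
    # Hypothetical spec with one compliant and one non-compliant operation.
    spec = {
        "paths": {
            "/customers": {
                "get": {"operationId": "listCustomers", "security": [{"oidc": []}]},
                "post": {},
            }
        }
    }
    for finding in lint_spec(spec):
        print(finding)
```

Because the rules run against the spec itself, the developer sees deviations while designing, long before a reviewer would.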

As the API platform owner…

Continuing on with our lifecycle, when someone makes a pull request (PR) in that repo, you know you have new code to review. For example, we have a brand new API design, or a developer has taken the Swagger from our previous step and now built the API implementation, and added this new code to the repo. We need to make sure that the API that's been submitted for review still meets our standards.

This is where things get really cool. You can use the Insomnia CLI, inso, to programmatically invoke all of the quality and governance checks available to the developers in Insomnia itself any time a PR is made. You can configure the checks (linting the spec, running the tests, generating the declarative config) to run in whichever order is best for your use case, pipeline stage and deployment target. And you can configure it so that the PR is only approved once all of the checks have been passed. No manual intervention required.

Outcome(s): New code is only merged into a repo once the quality and governance has been validated; everything is automatic and programmatic so you can do this at speed
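The gating logic described above (run the checks in a configurable order and approve only if all pass) could be sketched as follows. This is a minimal illustration, not Kong's pipeline: the `Check` and `run_gate` names are hypothetical, and the commands are placeholders. In a real pipeline each command would be whatever CLI invocation your CI uses for linting the spec, running the tests and generating the declarative config.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class Check:
    name: str
    command: list[str]  # the command line to run for this check


def run_gate(checks: list[Check]) -> bool:
    """Run checks in the given order; stop and fail on the first non-zero exit."""
    for check in checks:
        result = subprocess.run(check.command, capture_output=True, text=True)
        if result.returncode != 0:
            print(f"FAIL {check.name}: {result.stderr.strip()}")
            return False
        print(f"PASS {check.name}")
    return True


if __name__ == "__main__":
    # Placeholder commands that always succeed; replace them with your
    # pipeline's real lint, test and config-generation commands.
    ok = [sys.executable, "-c", "pass"]
    checks = [
        Check("lint spec", ok),
        Check("run tests", ok),
        Check("generate declarative config", ok),
    ]
    # A non-zero exit code is what tells the CI system to block the PR.
    sys.exit(0 if run_gate(checks) else 1)
```

Keeping the order as plain data makes it easy to vary the sequence per use case, pipeline stage or deployment target.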

As the API platform operator…

Completing the beauty of this workflow is the fact that you can programmatically upload that declarative config to the various modules and runtimes in Konnect (e.g., through CLIs like decK), and they will automatically configure themselves based on it. This part is taking all of the magic and benefits of GitOps when it comes to continuous deployment and applying them to the full API lifecycle.

Outcome(s): The declarative config automatically generated from a valid API spec enables fully automated - i.e., faster and repeatable - deployments and configurations in Konnect

Conclusion

This is an area we're investing in heavily at Kong. Seeing the struggles that so many companies are battling today with their service delivery mechanisms, the time and financial costs of QA teams conducting manual reviews, and the inconsistent service landscapes they're trying to productize (and failing as a result) shows us there is a lot more work to be done here across the industry. We are innovating further in this space, with more features planned because we want to take all of this pain away.

With APIOps, you don't have to sacrifice speed or governance. You can achieve both, in a sustained and automated way. Adopting an API-driven or microservices-based approach usually does mean a culture change, and the only way to make that culture change sustainable at scale is to replace what you had before with something that makes everybody's lives easier.

For developers, we've enabled them to be autonomous. We're empowering them to work in the way that makes them most productive because we're providing them with self-serve tooling they can use for the first round of governance checks. This removes all of the frustration and manual effort that we used to ask of our developers to follow best practice: now, they are alerted instantly to any changes they need to make, so they can make those changes straight away.

For API platform owners, we've given them a way to ensure 100% coverage of governance checks across every service that's being built and a way to ensure that all of these governance checks are immediate. So we've put in place the guardrails that ensure developer autonomy is safe, which means that everybody's happy.

One word of caution, however: Even if we've achieved the above and all our services are technically consumable, that doesn't guarantee they'll be reused. Achieving reuse broadly across your organization depends on a long-term mindset shift as part of this API and microservice culture change to one in which all of your stakeholders are motivated to discover and reuse existing services before building their own. Even if we've beautifully automated our API lifecycles to deliver a perfect service every time using APIOps, if your distributed teams are not aligned in their thinking that reuse is a necessary behavior for the benefit of the overall organization, you cannot drive this change in culture.

These services power digital experiences: connectivity liberates the data flow between our increasingly distributed systems and applications. It is no surprise, therefore, that COVID-19 has accelerated the adoption of microservices, with 89% of technology leaders worldwide saying that creating new digital experiences to address COVID-19 challenges is critical to the success of their business. To deliver the agility necessary to innovate like this at speed, services are built as digital assets - or products - for consumption, and if they aren't being used then they might as well not exist (in fact, they definitely shouldn't). If we get this wrong, it is not just a case of wasted investment; it is our survival.