In the landscape of service discovery, selecting the appropriate tools and frameworks is essential for enhancing system functionality and robustness. These solutions provide the necessary infrastructure to enable seamless communication and integration across distributed microservices environments.

- - Consul: A versatile tool renowned for its ability to facilitate service connectivity and governance. It offers a comprehensive suite of features, including service mesh capabilities and detailed health monitoring. [Consul's architecture is designed to support scalability and reliability](https://konghq.com/blog/engineering/implement-a-canary-release-with-kong-for-kubernetes-and-consul)Consul's architecture is designed to support scalability and reliability, making it suitable for extensive and dynamic service environments.

- - Eureka: A distinguished service registry in the Spring Cloud suite, Eureka aids in managing service instances effectively. Known for its straightforward integration with Spring Boot, it offers a streamlined experience for handling service registrations and deregistrations, ensuring smooth client-side discovery processes.

- - Etcd: This robust key-value store is optimized for distributed systems requiring dependable data consistency and synchronization. Etcd supports complex coordination tasks through its transactional model, providing a sturdy foundation for service registries that demand precision and resilience.

- - Kubernetes: Integrated deeply into its orchestration capabilities, Kubernetes simplifies the service discovery process by automating the creation and management of service endpoints. Its DNS-based service discovery facilitates efficient communication within containerized applications, ensuring that services remain discoverable and accessible without manual intervention.

### Integration with Spring Boot

For developers within the Spring Boot ecosystem, service discovery becomes more straightforward through Spring Cloud's robust support mechanisms. The framework abstracts complex discovery operations, providing intuitive APIs that facilitate seamless communication. This integration allows developers to focus on core application logic, leveraging established discovery protocols to manage network interactions efficiently.

### Kong Mesh's Approach

[Service discovery within Kong Mesh](https://konghq.com/products/kong-mesh)Service discovery within Kong Mesh exemplifies a sophisticated approach to managing service interactions in dynamic environments. Integrating advanced protocols, it ensures efficient registration and communication, supporting the scalability needed in modern architectures. This approach underscores the importance of adopting flexible discovery solutions that integrate smoothly with existing infrastructures, providing a cohesive framework for comprehensive service management.

## Best practices and considerations

Implementing service discovery effectively requires adherence to a set of best practices designed to enhance system reliability and performance. These practices focus on maintaining a resilient architecture capable of adapting to network variability and service dynamics, thereby ensuring consistent and efficient operation.

Opt for a service registry that ensures robust fault tolerance and operational continuity. This choice is crucial for minimizing service disruptions in dynamic environments. By employing a system architecture that distributes registry responsibilities, service discovery processes remain unaffected by individual node failures, thus guaranteeing uninterrupted service availability.

### Key strategies for robust discovery

To ensure network accuracy, employ comprehensive validation checks. This approach verifies the operational status of service instances, ensuring that only those meeting specific criteria remain accessible. Establish a multi-faceted validation framework that examines various operational parameters, contributing to reliable service interaction.

Implementing a mechanism to store frequently accessed service mappings within the service instances themselves can significantly enhance response efficiency. This practice reduces dependency on external queries, thereby optimizing performance under standard operating conditions. By enabling swift access to necessary network mappings, services can interact more fluidly and effectively.

### Security and observability

In safeguarding service interaction, prioritize robust data protection protocols. Implement measures that ensure data confidentiality and integrity across service communications. This includes establishing secure channels and employing rigorous authentication measures, fortifying the network against potential vulnerabilities.

Enhance system transparency by establishing a thorough event tracking system for service interactions. This system should capture detailed records of service communications, providing invaluable insights for diagnosing and resolving network issues. By maintaining comprehensive oversight, development teams can swiftly address irregularities, ensuring smooth and continuous service operations.

## Implementing Service Discovery in Your Application

Integrating service discovery into your application enhances the ability to manage and scale microservices efficiently. Spring Boot applications can leverage advanced frameworks to facilitate comprehensive service discovery mechanisms. These tools allow developers to implement dynamic service interactions that optimize network management and resource allocation.

### Spring Boot Example

For developers working with Spring Boot, incorporating service discovery involves a few essential steps. Begin by extending your project with dependencies that support service registration and discovery, such as those provided by Spring Cloud. Configure your application to interact with your selected service registry by defining the necessary settings in the configuration files. This connection ensures that your application becomes part of a dynamic ecosystem where service locations are managed automatically.

Upon initialization, your Spring Boot application can register itself with the service registry, thus becoming accessible to other networked services. This configuration supports dynamic routing and load balancing, enabling efficient service request management without manual oversight. By following these procedures, developers can effectively implement service discovery within their Spring Boot applications, ensuring seamless service interactions.

### Common Pitfalls and Tips

Implementing service discovery may involve navigating several challenges, but recognizing common pitfalls can help mitigate these issues. One frequent obstacle is the inadequate adaptation of configurations to specific deployment environments. Tailor settings to meet the unique demands of your operational context to ensure optimal performance and compatibility.

To maintain consistency and prevent disruptions, conduct regular audits of application configurations and registry entries. This practice helps ensure that service discovery mechanisms function correctly across different environments. Additionally, implement robust observability practices to quickly identify and resolve anomalies in service behavior.

Incorporating strategies to handle unexpected service outages is crucial for maintaining system stability. Equip applications with fallback mechanisms and adaptive response strategies to manage temporary service disruptions. By doing so, applications can remain resilient and maintain operational continuity even in the face of individual service instance failures.

## Sevice Discovery FAQs

### What is service discovery and how does it work?

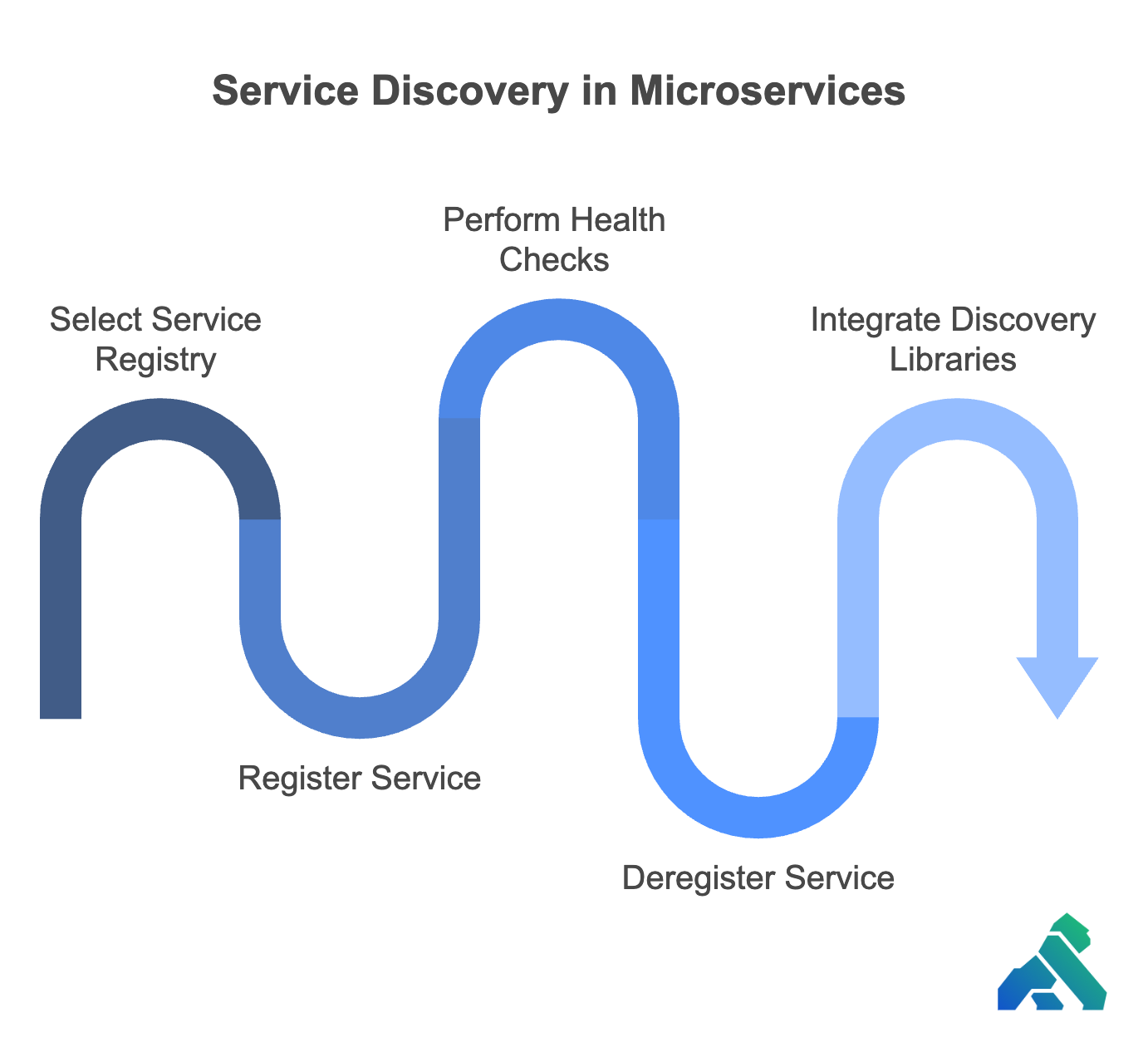

Service discovery serves as a foundational element in orchestrating interactions between microservices, streamlining the identification and integration of service endpoints in a network. It operates through a centralized registry that dynamically tracks available service instances, allowing for flexible communication paths. By continuously updating the registry, service discovery maintains an accurate representation of the network, ensuring efficient service connectivity without static configurations.

### Why is service discovery important in microservices?

Service discovery plays a pivotal role in the microservices ecosystem by facilitating seamless communication across distributed systems. It addresses the challenges of managing dynamic network configurations, enabling services to scale and evolve without manual intervention. This adaptability supports the independent evolution of services, enhancing the overall agility and robustness of the architecture.

The selection of service discovery tools should align with the specific operational demands of your system infrastructure. Tools such as Consul and Eureka offer robust capabilities for managing service endpoints, with features designed to support varied deployment scenarios. These tools provide integrated health monitoring and discovery mechanisms, ensuring reliable and scalable service interactions.

### How can I implement service discovery in my application?

To implement service discovery, integrate a service registry solution that suits your architecture's demands for scalability and reliability. Configure your application to dynamically register service instances and perform health checks to maintain registry accuracy. Utilize frameworks that facilitate seamless integration with service registries, ensuring efficient communication and consistent performance across diverse environments.

### What are the common patterns for service discovery?

Service discovery is typically implemented using two main patterns, each optimizing different aspects of service communication. Client-side discovery gives clients the ability to manage service instance retrieval and load balancing, offering flexibility in directing service requests. Conversely, server-side discovery centralizes these functions in a load balancer, simplifying client operations and enhancing system efficiency. These patterns may be adapted or combined to suit specific architectural needs, promoting resilient service exchanges.

### Conclusion

The exploration of service discovery unveils a complex interplay of components that shape the efficiency and reliability of microservices architectures. Beyond basic connectivity, service discovery orchestrates the dynamic relationships between services, ensuring that systems can evolve seamlessly within their operational environments.

Each aspect of service discovery—from the meticulous configuration of service registries to the strategic implementation of discovery protocols—contributes to a sophisticated network of interactions. These elements ensure that services are not only agile but also capable of adapting to the rapid changes characteristic of modern distributed systems. This continuous refinement process keeps pace with evolving application demands, leveraging technological advancements for enhanced operational efficiency.

As we delve into the intricacies of service discovery, we uncover a transformative potential that redefines service interaction and performance. The tools and frameworks at our disposal serve not only as facilitators of communication but also as enablers of innovation. By integrating these technologies, organizations are poised to advance their digital transformation journeys, ready to deliver applications that are robust, responsive, and prepared for future challenges.

As you embark on your journey to build scalable and resilient microservices architectures, remember that service discovery is a critical component that enables seamless communication and adaptability. By leveraging the right tools, frameworks, and best practices, you can unlock the full potential of your distributed systems and drive innovation in your organization. If you're ready to take your API management to the next level, we invite you to[ request a demo](https://konghq.com/contact-sales) request a demo to explore Kong's API platform capabilities and discover how we can help you achieve your goals.