# Reasons to Use an API Gateway

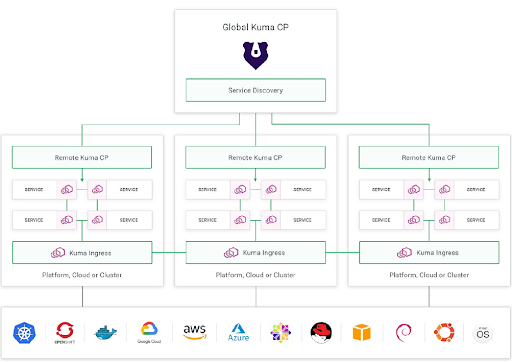

Organizations are increasingly adopting microservices for the architecture's inherent flexibility and scalability, but to fully realize the benefits of a microservices approach, you need an API gateway. A microservice-based system can consist of do
