“Gateway Mode” in Kuma and Kong Mesh

Introduction

One of the most common questions I get asked is about the relationship between Kong Gateway and Kuma or Kong Mesh. The link between these two sets of products is a huge part of the unique “magic” Kong brings to the connectivity space.

In this blog post and the video below, we’re going to jump right into how these products relate and how you can use them together. First, let’s define a couple of the terms involved.

Skipping to the End

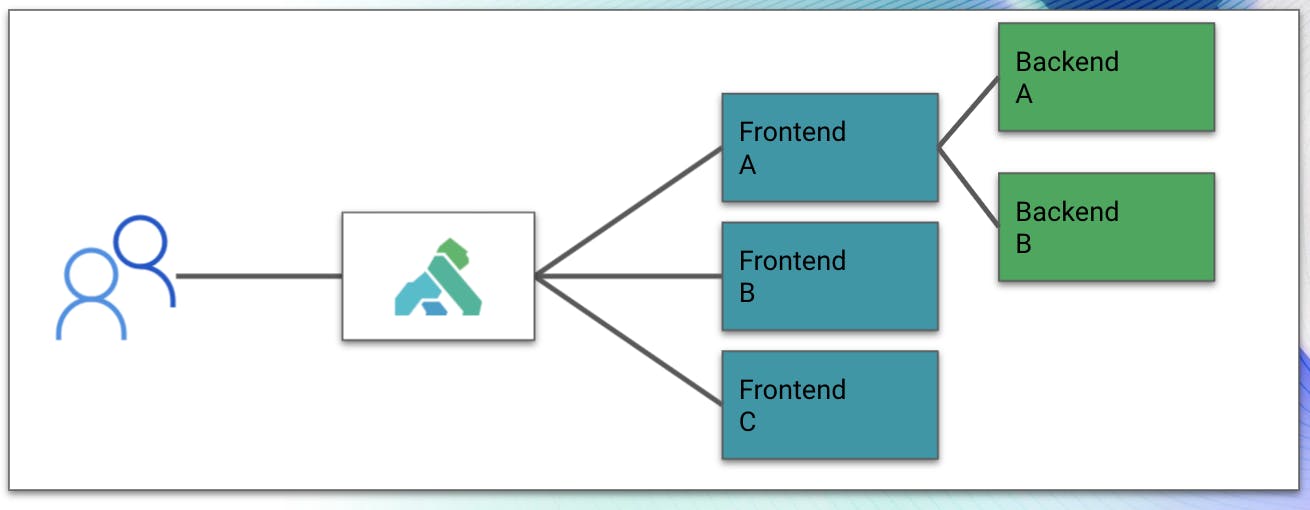

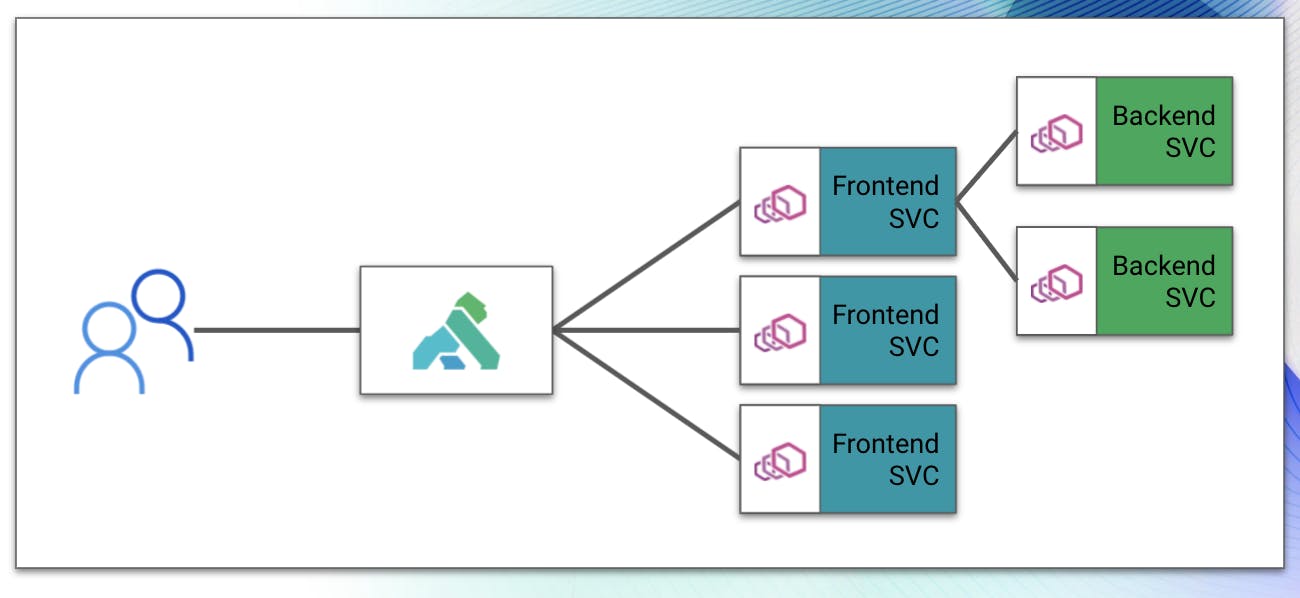

The core of this blog post is how Kuma and Kong Mesh let you use ANY API gateway as a “doorway” into the service mesh. Further down, we’ll step through how these components talk to each other. For now, let’s talk about the service mesh gateway mode.

Kuma and Kong Mesh ship with a gateway mode that disables the inbound Envoy listener on the service’s sidecar. Traffic from outside the mesh can then reach the gateway directly, while the gateway’s outbound traffic still flows through the mesh. Using this functionality, we can bolt on any API gateway (ideally Kong Gateway) as our entry point into the mesh. This requires that your API gateway be deployed with an Envoy sidecar acting as its data plane proxy.

In Kuma/Kong Mesh’s Kubernetes mode, this is handled through a specific set of annotations applied in your deployment YAML, either on the pod template of the Deployment or on a standalone Pod object.
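A minimal sketch of those annotations follows. It assumes a Kong Gateway container running in DB-less mode in a namespace named kong. The `my-gateway` names, image tag, namespace and port are hypothetical. The annotations sit on the pod template rather than on the Deployment’s own metadata, because Kuma acts on the pods the Deployment creates. Newer Kuma releases set sidecar injection with a label of the same name instead of an annotation.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-gateway              # hypothetical name
  namespace: kong               # hypothetical namespace
spec:
  replicas: 1
  selector:
    matchLabels:
      app: my-gateway
  template:
    metadata:
      labels:
        app: my-gateway
      annotations:
        # Ask Kuma to inject the Envoy sidecar into this pod
        # (can be dropped if injection is enabled for the whole namespace)
        kuma.io/sidecar-injection: enabled
        # Gateway mode: no inbound listener; outbound traffic still uses the mesh
        kuma.io/gateway: enabled
    spec:
      containers:
      - name: proxy
        image: kong:3.4         # assumed image tag
        env:
        - name: KONG_DATABASE   # run Kong without a database (DB-less)
          value: "off"
        ports:
        - containerPort: 8000   # Kong's default proxy port
```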

Note: Sidecar injection can be enabled on the pod itself or for the namespace as a whole. If it’s enabled at the namespace level, you can omit the injection annotation on the pod. The gateway annotation is still required.

In Universal mode, we handle this configuration through the kuma-dp configuration file.
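Below is a sketch of that file for a Kuma 1.x-style Universal deployment, assuming a mesh named default. The data plane name, address and service tags are hypothetical. The `gateway` block under `networking` replaces the usual `inbound` list, and that substitution is what puts the sidecar in gateway mode. Newer Kuma releases also accept a `type` field in this block, where `DELEGATED` corresponds to the mode described here.

```yaml
type: Dataplane
mesh: default
name: kong-gateway-01             # hypothetical data plane name
networking:
  address: 10.0.0.10              # hypothetical IP of the gateway host
  gateway:
    # Gateway mode: no inbound listener; this tag names the gateway service
    tags:
      kuma.io/service: kong-gateway
  outbound:
  # A mesh service the gateway forwards to, reachable locally on this port
  - port: 33033
    tags:
      kuma.io/service: frontend   # hypothetical upstream service
```

The file is then handed to kuma-dp, for example through its `--dataplane-file` flag.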

The kuma-dp process is the actual sidecar process executing, so there is no need to include an injection annotation like in Kubernetes.

With these configurations applied, Kuma/Kong Mesh marks the service as an API gateway service and allows inbound connections to it from outside the mesh.

Understanding Kong Gateway

Kong is most widely known for delivering the most powerful API gateway in the market today. This API gateway provides the smoothest process to get workloads into a given environment, whether it’s a mesh environment or otherwise. The API gateway acts as the user’s front door to consuming everything from APIs to general web content.

On top of that, Kong Gateway has an extremely flexible deployment model. It can run on public cloud workloads, virtual machines and Kubernetes. You can manage it in several ways. The most common are Kong’s RESTful Admin API, declarative configuration with decK, and Konnect, Kong’s SaaS connectivity platform.

One of Kong Gateway’s strongest features is its plugin marketplace. Plugins greatly extend what the API gateway can do, adding capabilities like key authentication, rate limiting, Open Policy Agent (OPA) integration and more. This makes Kong Gateway especially attractive in a service mesh environment. Advanced capabilities can be applied to inbound traffic at the gateway, on top of what the service mesh itself provides.
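The following is a sketch of the declarative approach, assuming decK and Kong 3.x (`_format_version: "3.0"`). It defines a service, a route, the key-auth and rate-limiting plugins on that service, and a consumer with an API key. The service name, upstream URL, consumer and key are hypothetical. Pointing the upstream at a .mesh address assumes Kong runs with a Kuma sidecar in gateway mode.

```yaml
_format_version: "3.0"
services:
- name: frontend
  # Hypothetical upstream: a Kuma .mesh address, resolved via the gateway's sidecar
  url: http://frontend.kong.svc.80.mesh
  routes:
  - name: frontend-route
    paths:
    - /
  plugins:
  # Require an API key on every request to this service
  - name: key-auth
  # Allow at most 5 requests per minute, counted in each Kong node's memory
  - name: rate-limiting
    config:
      minute: 5
      policy: local
consumers:
- username: demo-user              # hypothetical consumer
  keyauth_credentials:
  - key: demo-key                  # hypothetical credential, do not reuse
```

With key-auth’s default settings, clients send the key in an `apikey` header.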

Kong Ingress Controller

Kong Ingress Controller (KIC) is built on Kong Gateway and designed to operate in a Kubernetes-native way. It works with the service mesh out of the box and can be used to quickly stand up an inbound gateway in your environment. In a future release, we will include this functionality in the kumactl install commands.

KIC comes configured out of the box to act as a gateway (the kuma.io/gateway: enabled annotation is already applied), so you can start using it with Kuma/Kong Mesh quickly. To use KIC in a single-zone mesh environment, reference the existing Kubernetes service name of the service registered in Kuma as the backend for the ingress. On Kubernetes, Kuma requires a Service for every workload deployed into the mesh, so that Service will already exist.
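Here is a sketch of such an ingress spec. It assumes the mesh workload is exposed by a Kubernetes Service named frontend on port 80 in the kong namespace (all hypothetical), and that KIC handles the `kong` ingress class. The Ingress must live in the same namespace as the Service it points at.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: frontend
  namespace: kong             # hypothetical; must match the Service's namespace
spec:
  ingressClassName: kong      # route handled by KIC
  rules:
  - http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            # The existing Service that Kuma already tracks for this workload
            name: frontend
            port:
              number: 80
```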

For multi-zone environments, you’ll need to create a Kubernetes service that references the service’s .mesh address. This is covered in the KIC documentation.

For example, you can create a service named front of type ExternalName whose external name is frontend.kong.svc.80.mesh. This tells the Kubernetes cluster that any request to the front service name should resolve to the external name we’ve specified. Because that name is a .mesh address, resolved by Kuma or Kong Mesh’s internal DNS, the traffic is sent through KIC’s Envoy sidecar.
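The front service described above might look like the sketch below. The namespace and port are assumptions. The externalName value comes from the example in the text.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: front
  namespace: kong             # assumed: same namespace as the Ingress using it
spec:
  # ExternalName makes cluster DNS answer "front" with a CNAME to the .mesh name
  type: ExternalName
  externalName: frontend.kong.svc.80.mesh
  ports:
  - port: 80                  # assumed, matching the 80 in the .mesh name
```

An Ingress handled by KIC would then name `front` as its backend service.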

Kuma and Kong Mesh – Beyond the API Gateway

If Kong Gateway and KIC act as the “front door,” the service mesh is the “office building” (many offices, interconnected). Kuma is the open-source, Envoy-based service mesh that Kong donated to the CNCF. Kong Mesh is the enterprise edition of Kuma, with added enterprise features. Both let users connect workloads in a way that puts security and automation first, with capabilities like advanced traffic routing, observability and self-healing out of the box.

At a high level, service meshes work by moving connectivity logic out of the application and into proxies that run alongside it. We commonly refer to these as sidecars or data planes. Once communication flows through these proxies, we can layer additional capabilities on top of it: traffic routing control, greater visibility into workload communication patterns (observability), end-to-end encryption of traffic, and the ability to influence application communication through policies.
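End-to-end encryption is one concrete example: in Kuma it is enabled for a whole mesh through the Mesh resource. Below is a minimal sketch for Kubernetes, assuming the default mesh and Kuma’s built-in certificate authority. The backend name `ca-1` is arbitrary.

```yaml
apiVersion: kuma.io/v1alpha1
kind: Mesh
metadata:
  name: default
spec:
  mtls:
    # Issue sidecar certificates from the CA backend named below
    enabledBackend: ca-1
    backends:
    - name: ca-1
      # Kuma generates and manages this CA itself
      type: builtin
```

Once this is applied, sidecars exchange traffic over mutual TLS. Whether a given connection is allowed is governed by traffic permission policies.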

These proxies (sidecars/data planes) receive their configuration from the service mesh control plane. When we apply a policy or other configuration, the control plane reads it, translates it into configuration that Envoy understands and delivers it to the proxies in the environment.
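For instance, the control plane turns a Kuma 1.x-style TrafficPermission like the sketch below into Envoy configuration for the affected proxies. The service tags are hypothetical. On Kubernetes, Kuma derives `kuma.io/service` values in the form `<service>_<namespace>_svc_<port>`.

```yaml
apiVersion: kuma.io/v1alpha1
kind: TrafficPermission
mesh: default
metadata:
  name: gateway-to-frontend
spec:
  # Which services may open connections...
  sources:
  - match:
      kuma.io/service: kong-gateway_kong_svc_80   # hypothetical gateway tag
  # ...to which destination services
  destinations:
  - match:
      kuma.io/service: frontend_kong_svc_80       # hypothetical upstream tag
```

Newer Kuma releases express the same intent with the MeshTrafficPermission policy, which uses a different schema.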

The control plane also delivers connectivity details about the other proxies in the environment, so the proxies can communicate with each other directly. This interaction model is the core of the “decentralized” model we talk about in service mesh, and it’s one of the platform’s strongest aspects.

Better Together

We’ve built Kuma and Kong Mesh to be highly extensible, so you can integrate the tools you have already operationalized in your environment with service mesh. Fronting service mesh with Kong Gateway will give you the greatest level of flexibility and functionality as you look to create end-to-end connectivity in your environment.