Engineering organizations building modern API-driven systems have different priorities when it comes to their API management solution. These priorities will drive design decisions about the deployment of various components for API gateways.

Some organizations are looking to optimize compute resource utilization, helping to control costs or reduce operational toil. Other organizations, like government agencies or financial institutions, require enforcement of strict privacy and informational boundaries. Some groups are looking to limit central operational burdens while maintaining central governance over API deployments.

Kong provides multiple solutions to address your API gateway platform requirements. [Kong Enterprise](https://docs.konghq.com/gateway/latest/kong-enterprise/) and [Kong Konnect](https://docs.konghq.com/konnect/) make up the on-premises and SaaS-hosted products, respectively. This article explores a variety of options you have in deploying the components of both products and discusses trade-offs across various deployment strategies.

## Gateway Planes

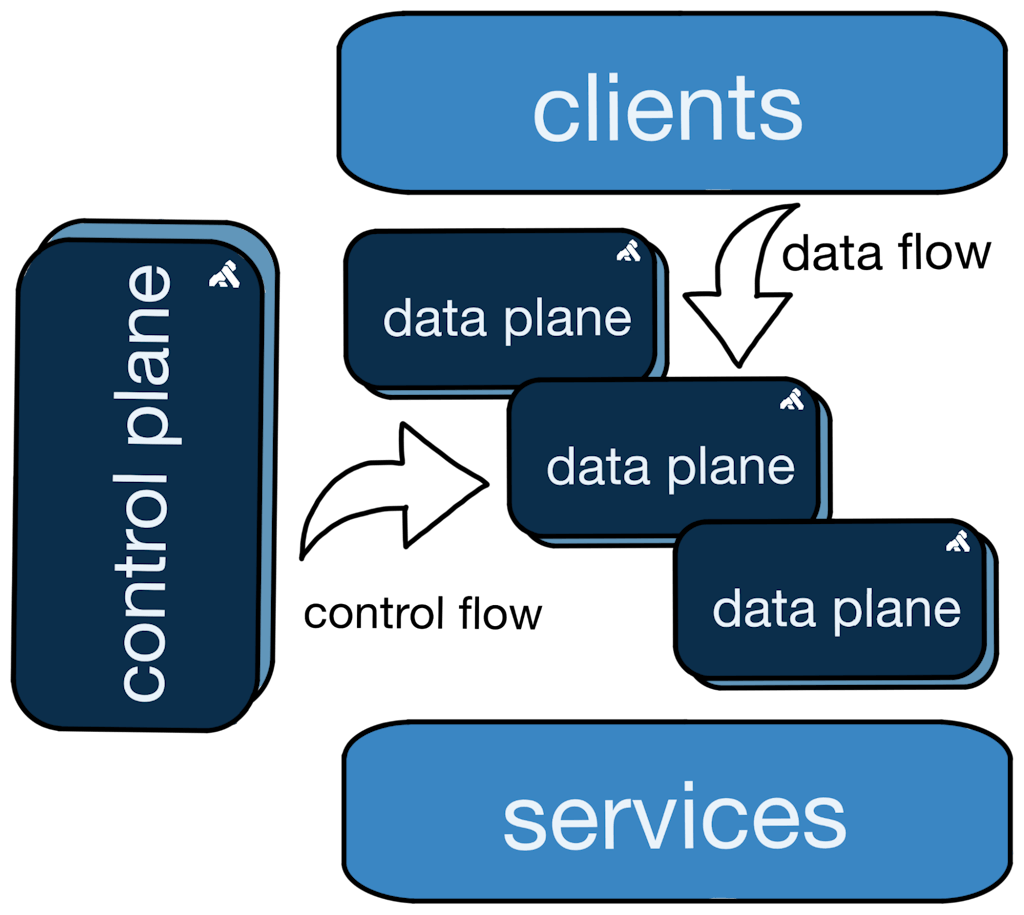

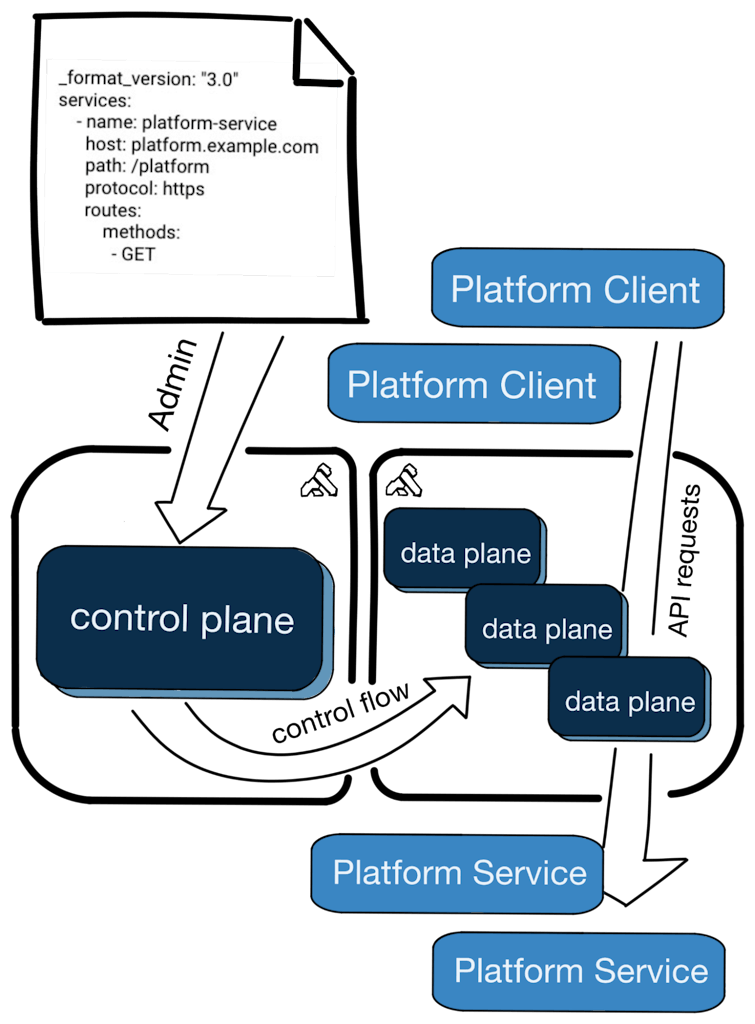

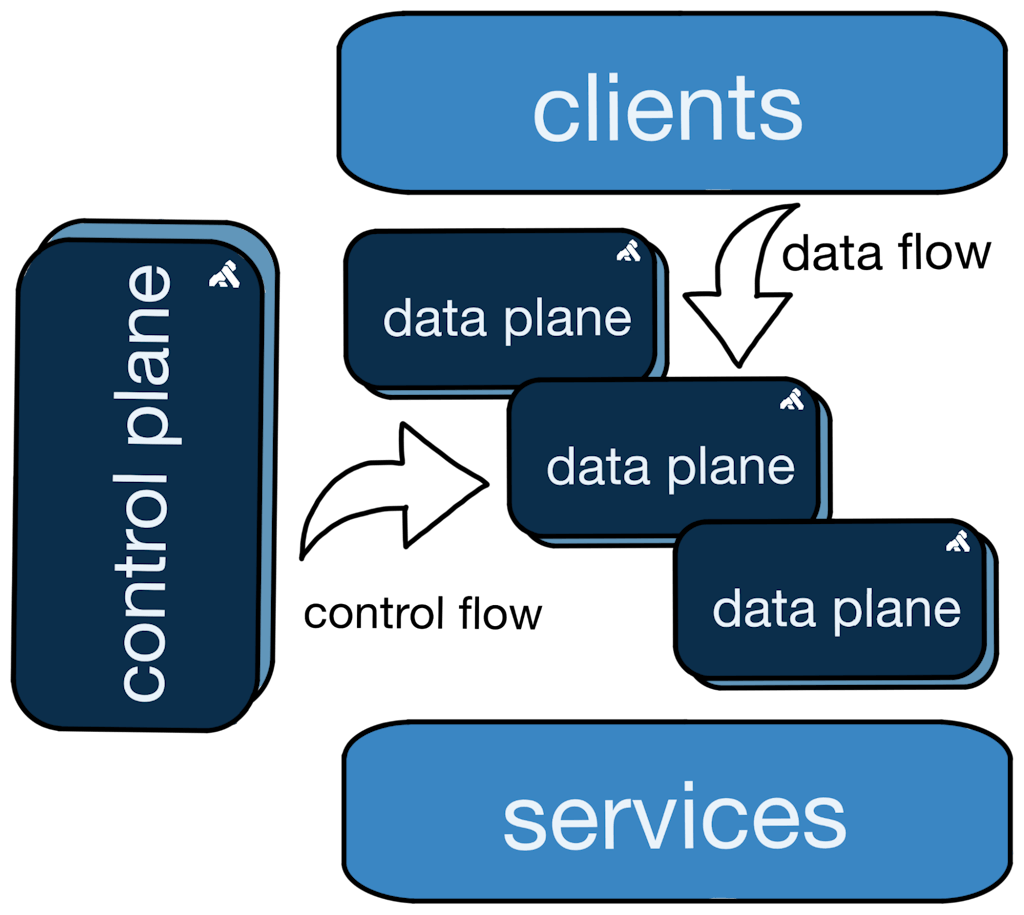

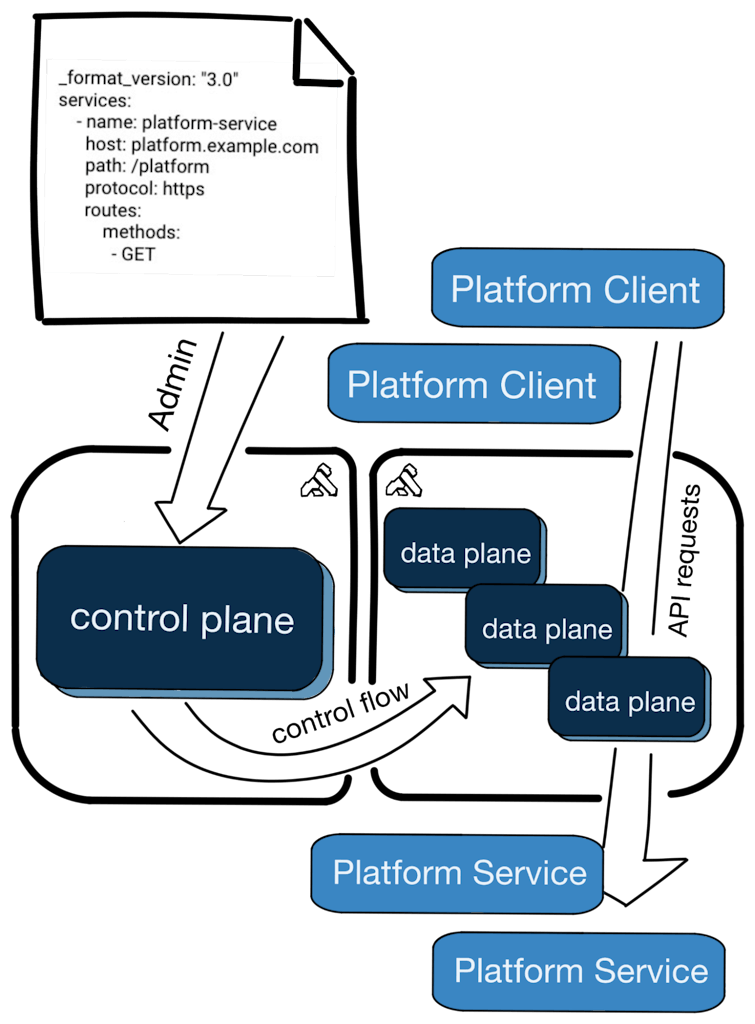

All Kong Gateway deployments are made up of two main components: a control plane (CP) and a data plane (DP).

Control planes serve the following key functions in the API platform:

- ***Configuration change management***: Control planes provide validation, governance, audit, and distribution of configuration to data planes.

- ***Configuration storage isolation and resilience***: Data planes have ephemeral lifetimes and may fail or be horizontally scaled to handle variable request volumes. The control plane frees the data plane from the concern of durable configuration storage.

Data planes serve one main function:

- **Routing client traffic:** Data planes are the critical path for client requests. They must be reliable, responsive, and secure.

Kong Gateway supports a variety of [deployment topologies](https://docs.konghq.com/gateway/latest/production/deployment-topologies/). In some cases, control and data planes are run as a single process, but often they are run independently, in [Hybrid Mode](https://docs.konghq.com/gateway/latest/production/deployment-topologies/hybrid-mode/). Hybrid Mode decouples the control and data planes, allowing for scale, resiliency, security, and optimized resource usage. How you choose to deploy these components will depend on your organization's priorities and capabilities. Most non-trivial deployments benefit from Hybrid Mode, and that's the deployment topology we'll focus on in this article.

Before looking at the deployment options, let's recap software tenancy and Kong configurations.

## Kong and Tenancy Designs

Your organization is likely made up of teams that develop independent services accessed by APIs. Additionally, organizations typically have centralized operational teams that support the development teams by managing compute resources and ensuring adherence to company policies. API gateways help operational teams by centralizing client API access (via the data plane) and by helping them monitor and govern API behavior (via the control plane). API gateways help the development teams by abstracting away common API management concerns, like access controls, rate limiting, or other governance concerns. This frees the development teams to focus on business use case development.

From the central operational team's perspective, these independent development teams can be viewed similarly to *tenants* of a building. Each team is occupying a portion of the shared company resources and should comply with company policies.

### Tenancy

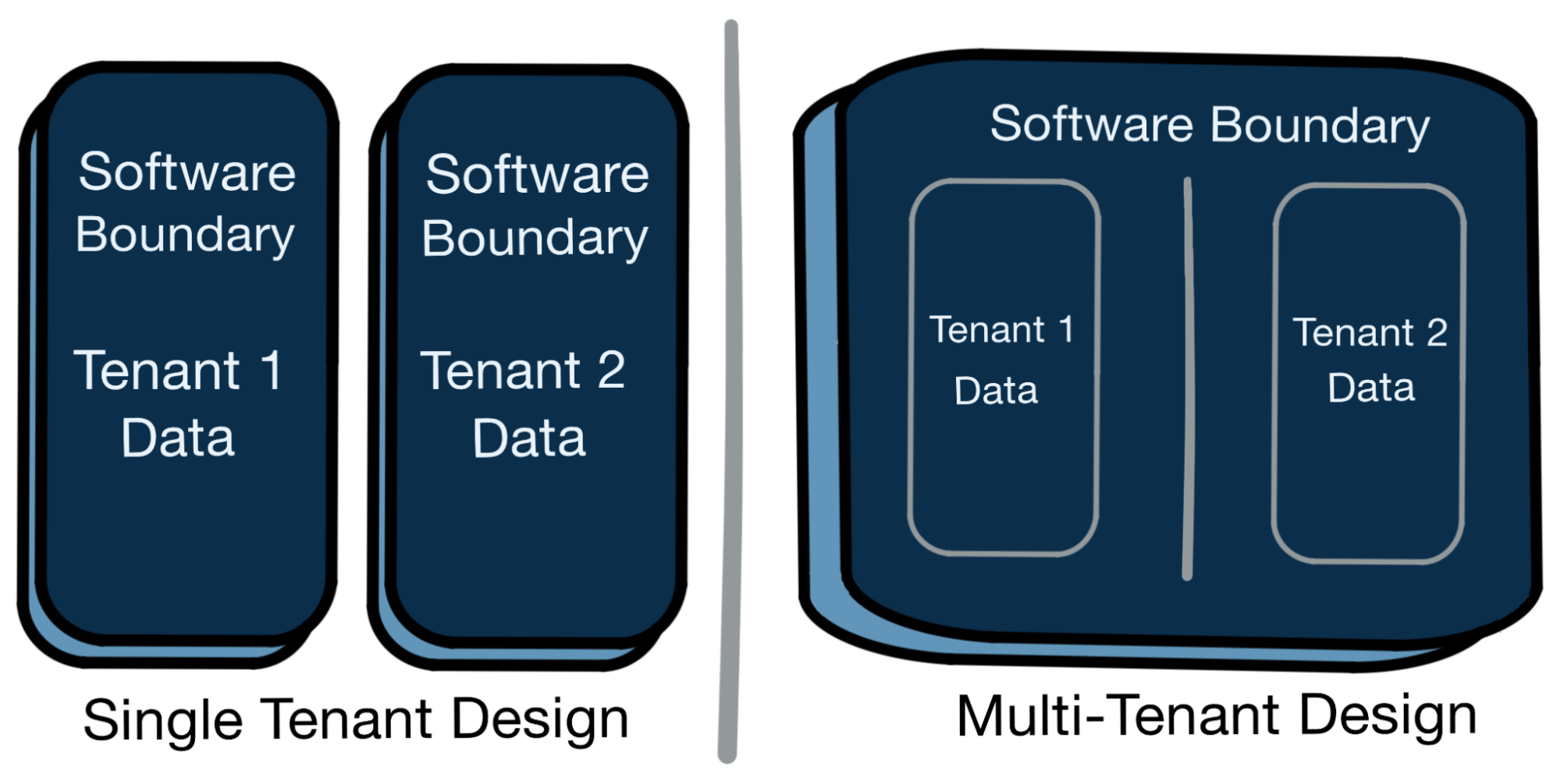

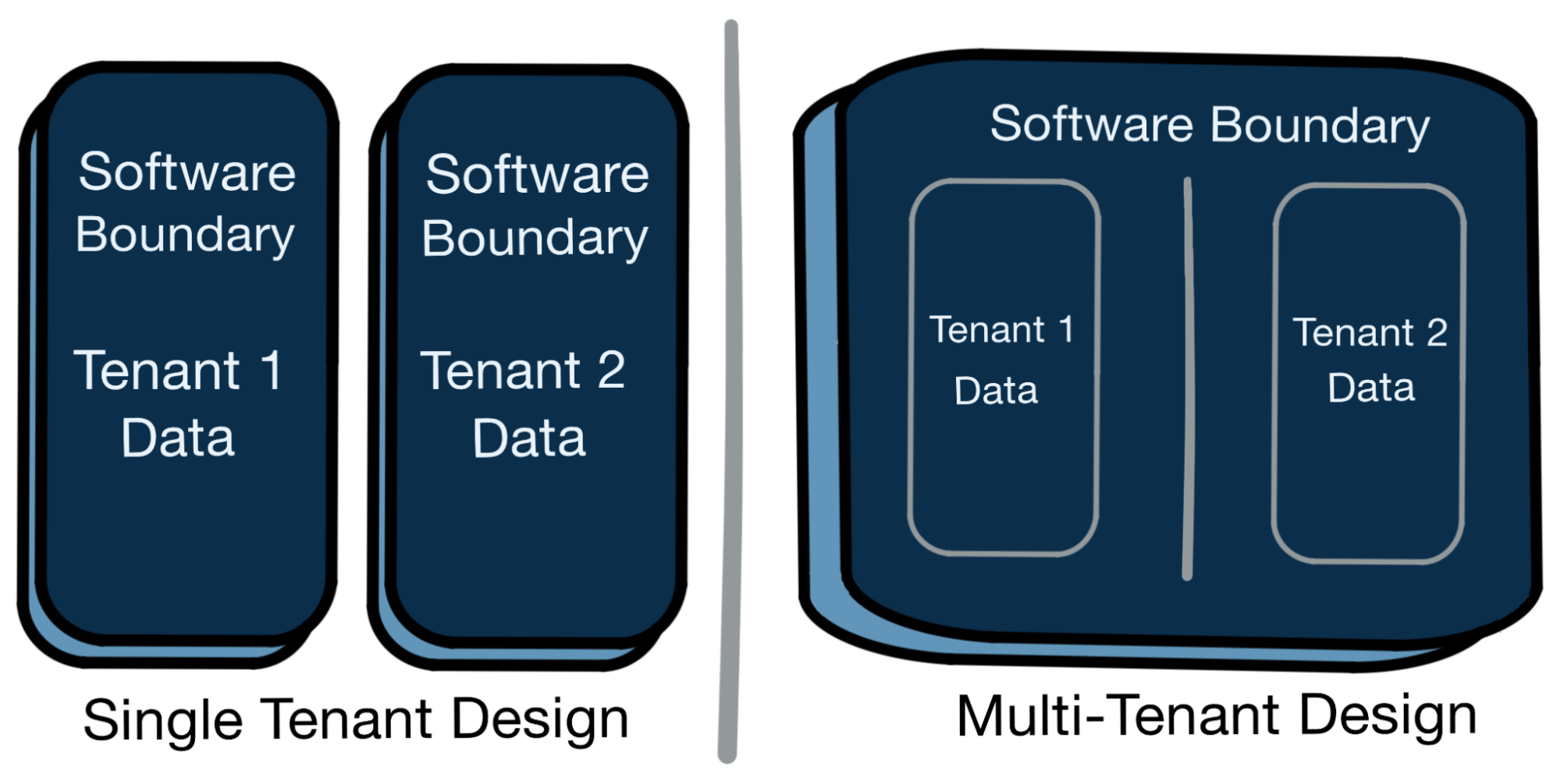

Traditionally, software was designed with a single tenant in mind. That is, one process or component was typically designed to host one user or customer. As distributed systems and cloud computing have become more prevalent, software architectures have begun to build the concept of multi-tenancy directly into products. Single vs multi-tenancy is determined by the positioning of the software boundary in relation to user data.

The lists below highlight some of the tradeoffs in these tenancy designs.

##### Single Tenancy

- *Strongest tenant data protection*: With no direct access between tenant data, single tenant designs can help prevent unintended exposure of information across users or customers.

- *Higher operational burden*: More software processes typically means managing more machines, connections, and supporting resources.

- *Potential resource underutilization*: For "always on" systems, like APIs, compute must be allocated and ready for use on demand. With single tenancy, resources are less dynamic and may sit idle during low-demand periods.

##### Multi-Tenancy

- *Weaker tenant data protections:* When software hosts multiple users’ data within one process, there's only a soft boundary that must be preserved.

- *Lower operational burden*: Fewer software processes typically means managing fewer machines, connections, and supporting resources.

- *Potential resource optimization*: Multiple tenants can be "packed" into available resources, which may be more optimal depending on demand across the tenants. As a tradeoff, resources are also constrained by other users within the shared software boundary. This is often referred to as the "noisy neighbor" problem.

Generally, single-tenant solutions promote stronger data segregation and reduce noisy neighbor concerns, at the cost of more operational overhead. Multi-tenant solutions allow for greater resource utilization and potentially reduce operational toil, but they must securely commingle tenant data within the software boundary. Which strategy you choose depends on your priorities and the structure of your engineering organization.

### Kong Gateway Configuration

The Kong Gateway configuration is the main system that defines the gateway's runtime proxying behavior. This configuration includes [services](https://docs.konghq.com/gateway/latest/key-concepts/services/), [routes](https://docs.konghq.com/gateway/latest/key-concepts/routes/), [plugins](https://docs.konghq.com/gateway/latest/key-concepts/plugins/), and other objects that determine the behavior of the gateway while routing client traffic.

In Hybrid Mode, *all* connected data planes receive the *full configuration* from their connected control plane. This design allows the data plane nodes to be *fungible*, meaning any node in the cluster can serve traffic for any client (assuming network connectivity). There are, however, tradeoffs to this design, and how you organize your Kong Gateway configurations will largely depend on the deployment strategies you choose.
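The idea of fungible data planes can be sketched with a small toy model. This is not Kong code: the `ControlPlane` and `DataPlane` classes, the service names, and the exact-path lookup are all hypothetical simplifications, used only to show that every connected node ends up with the same full configuration.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class DataPlane:
    """Toy data plane: holds a copy of whatever configuration it was last sent."""
    name: str
    config: dict = field(default_factory=dict)

    def route(self, path: str):
        # Return the upstream service for an exact path, or None if no route matches.
        return self.config.get("routes", {}).get(path)


@dataclass
class ControlPlane:
    """Toy control plane: owns the durable configuration and pushes it out."""
    config: dict
    data_planes: list = field(default_factory=list)

    def connect(self, dp: DataPlane) -> None:
        self.data_planes.append(dp)
        self.push()

    def push(self) -> None:
        # Every connected data plane receives the full configuration.
        for dp in self.data_planes:
            dp.config = copy.deepcopy(self.config)


cp = ControlPlane(config={"routes": {"/orders": "orders-service", "/users": "users-service"}})
dp_a, dp_b = DataPlane("dp-a"), DataPlane("dp-b")
cp.connect(dp_a)
cp.connect(dp_b)

# Either node can answer for either route, because both hold the same configuration.
print(dp_a.route("/users"), dp_b.route("/orders"))
```

The same property that makes the nodes interchangeable also means that anything placed in the configuration is present on every node, which is the tradeoff the deployment strategies below have to manage.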

The following sections go into detail on common strategies and their tradeoffs.

## Deployment Strategies

We're going to look at the different deployment strategies for Kong Gateway. We'll break these strategies down by the combination of tenancy in both the control and data planes.

### Single Tenant CP / Single Tenant DP (Kong Enterprise Default Model)

Single tenant control and data planes are the default behavior in Kong Gateway. For single tenancy, designing the gateway configuration is straightforward, as you don't need to be concerned with logical separation of objects within the configuration. Each configuration supports one tenant, and every deployed data plane will receive the full configuration. Every data plane node can route traffic for every client (assuming network connectivity).

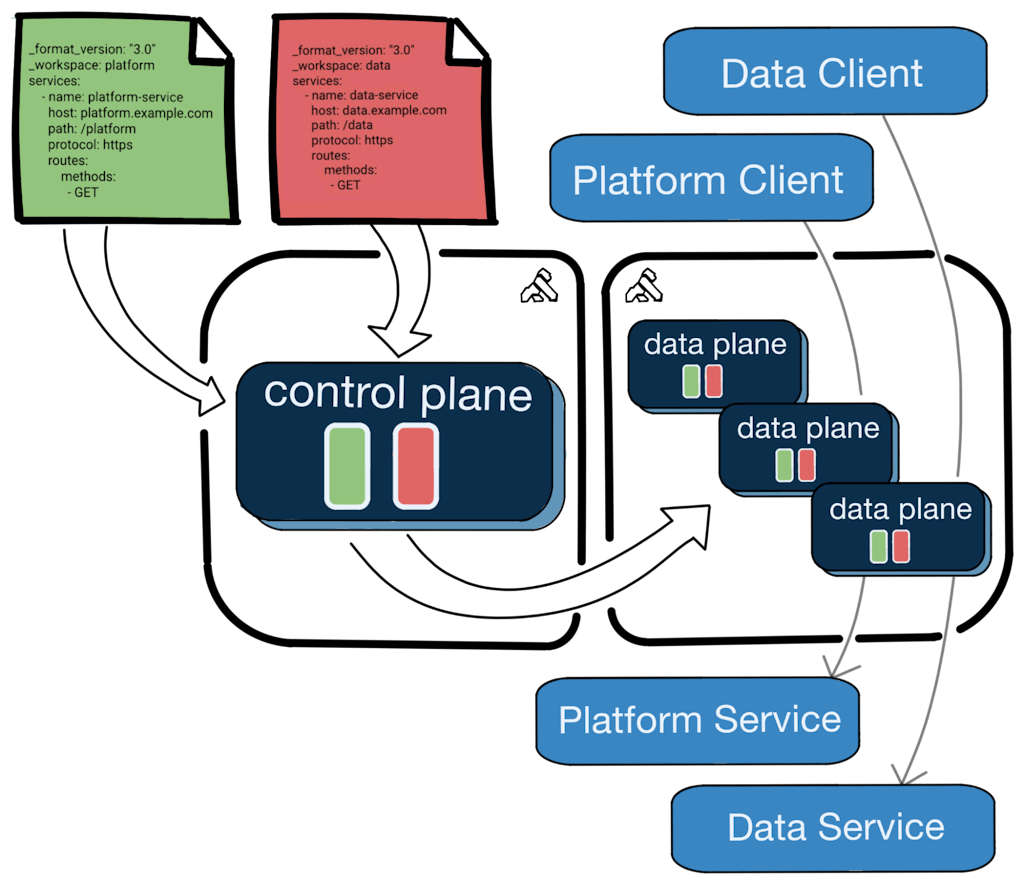

An example single tenant hybrid-mode deployment diagram is straightforward:

In this model, you'll be required to manage a full deployment for each tenant in your organization. Every tenant added in this model will incur direct increases in actual compute expense and indirect added expense in operational burden. In return, each tenant will have a full dedicated deployment that includes allocated data plane compute and a fully isolated control plane configuration.

The documentation provides instructions on [deploying Kong Gateway in Hybrid Mode](https://docs.konghq.com/gateway/latest/production/deployment-topologies/hybrid-mode/setup/).

### Multi-tenant CP / Multi-tenant DP (Kong Enterprise Workspaces Model)

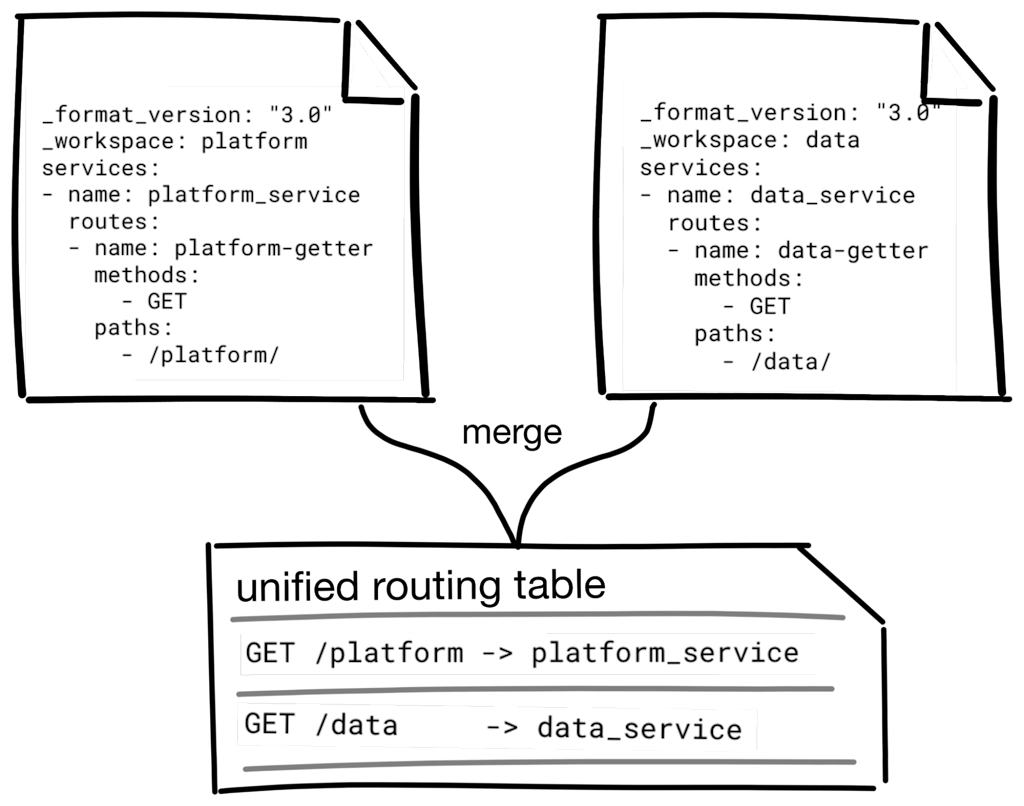

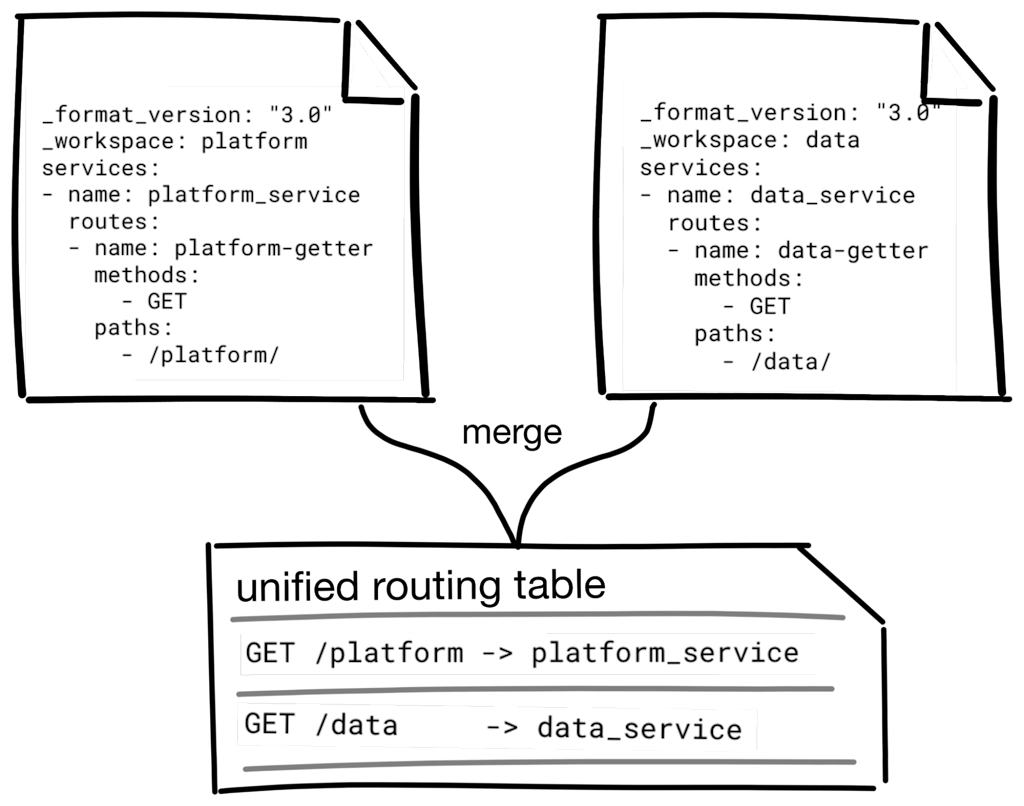

In Kong Enterprise, multi-tenancy is supported with [workspaces](https://docs.konghq.com/gateway/latest/kong-enterprise/workspaces/#main). Workspaces isolate gateway configuration objects while maintaining a unified routing table on the data plane to support client traffic.

How you design your workspaces is largely influenced by your specific requirements and the layout of your organization. You may choose to create workspaces for teams, business units, environments, projects, or some other aspect of your system.

When pairing workspaces with [RBAC](https://docs.konghq.com/gateway/latest/production/access-control/enable-rbac/), Kong Gateway administrators can effectively create tenants within the control plane. The gateway administrator creates workspaces and assigns administrators to them. The workspace administrators have segregated and secure access to only their portion of the gateway configuration in [Kong Manager](https://docs.konghq.com/gateway/latest/kong-manager/#:~:text=Kong%20Manager%20is%20the%20graphical,Create%20new%20routes%20and%20services), the [Admin API](https://docs.konghq.com/gateway/latest/admin-api/), and the declarative configuration tool [decK](https://docs.konghq.com/deck/latest/).
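To make the tenant boundary concrete, the following toy model sketches workspace-scoped administration. It is not Kong's RBAC implementation or API: the `Admin` class, the `can_manage` function, and the workspace names are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Admin:
    """Hypothetical administrator record for this sketch."""
    name: str
    workspaces: frozenset  # workspaces this admin may manage
    super_admin: bool = False


def can_manage(admin: Admin, workspace: str) -> bool:
    """Return True if the admin may change objects in the given workspace."""
    return admin.super_admin or workspace in admin.workspaces


# The gateway administrator spans all workspaces; tenant admins are scoped to their own.
gateway_admin = Admin("ops", frozenset(), super_admin=True)
payments_admin = Admin("alice", frozenset({"payments"}))

print(can_manage(payments_admin, "payments"))
print(can_manage(payments_admin, "identity"))
```

In this sketch the boundary is purely a permission check, which mirrors the "soft boundary" described for multi-tenant designs earlier: the objects live side by side, and access rules keep tenants apart.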

When workspaces are in use, the shared data plane routes client traffic based on a unified routing table built from the aggregate of all the tenants’ workspaces. Configuring Kong entities related to routing, such as services and routes, may alter the client traffic routing behavior of the data plane. Kong Gateway attempts to ensure that the routing rules don't contain conflicts before applying them to the data plane, but as you'll see, this isn't always straightforward.

For example, two separate tenants may each want an API route path matching `/users`. This won't work with a shared data plane because the gateway does not know which upstream service should receive the traffic to `/users`. When an administrator attempts to modify a configuration object that will change the routing behavior, Kong Gateway first runs the internal router to determine whether a conflict exists with any existing routes or services. If a conflict exists, the request is rejected before the routing rules of the shared data plane are modified.
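The check-before-apply behavior described above can be sketched as follows. This is a deliberately simplified stand-in, assuming exact path matching only; `SharedRouter`, `RouteConflict`, and the workspace and service names are hypothetical and do not reflect Kong's internal router.

```python
class RouteConflict(Exception):
    """Raised when a new route would collide with another workspace's route."""


class SharedRouter:
    """Toy unified routing table shared by every workspace."""

    def __init__(self):
        # Maps path -> (workspace, upstream service).
        self._routes = {}

    def add_route(self, workspace: str, path: str, service: str) -> None:
        owner = self._routes.get(path)
        if owner is not None and owner[0] != workspace:
            # Reject before touching the shared routing table.
            raise RouteConflict(f"{path} is already routed by workspace {owner[0]!r}")
        self._routes[path] = (workspace, service)

    def resolve(self, path: str):
        entry = self._routes.get(path)
        return entry[1] if entry else None


router = SharedRouter()
router.add_route("team-a", "/users", "team-a-users")

try:
    router.add_route("team-b", "/users", "team-b-users")
    rejected = False
except RouteConflict:
    rejected = True

print(rejected, router.resolve("/users"))
```

Because the conflicting request is refused up front, the existing tenant's traffic keeps flowing to its own upstream service.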

Kong Gateway supports routing rules [based on regular expressions](https://docs.konghq.com/gateway/latest/key-concepts/routes/#regular-expressions), which complicates the route collision detection mechanism when workspaces are in use. Regular expressions can't be fully evaluated by the conflict detection algorithm to prevent all collisions. The [documentation on Workspaces contains details](https://docs.konghq.com/gateway/latest/kong-enterprise/workspaces/) on how conflicts are detected across, and within, workspaces.
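A short illustration of why regular expressions are harder to check: two patterns can differ as text and still match the same request path. The sketch below uses Python's `re` module purely for demonstration (Kong evaluates routes with its own regex engine), and both patterns and their owning teams are hypothetical.

```python
import re

# Two hypothetical route patterns owned by different workspaces.
team_a_route = r"/users/\d+"
team_b_route = r"/users/[a-z0-9]+"


def both_match(path: str) -> bool:
    """Return True if a request path is matched by both patterns."""
    return bool(re.fullmatch(team_a_route, path)) and bool(re.fullmatch(team_b_route, path))


# Comparing the pattern text sees two distinct routes...
print(team_a_route == team_b_route)
# ...but some request paths are claimed by both, while others are not.
print(both_match("/users/42"), both_match("/users/alice"))
```

The overlap only becomes visible for particular request paths, so a check that compares route definitions cannot be relied on to catch every collision.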

The following architecture diagram shows the individual tenant administrators managing their own configurations over a single control plane. The connected data planes maintain the configured objects within workspaces (tenants) and route all client traffic through a unified routing table:

In this model, you can save operational overhead by sharing resources across your tenants. However, each tenant's configuration will be shared across the data plane’s compute, and careful usage of Kong's RBAC facility will be required. This shared configuration may include secret data (for example, TLS certificate keys), which is highly sensitive and must be secured. The Kong Enterprise secret reference feature, described later in this article, allows for indirect loading of secret values and can help mitigate this risk.

Workspaces are discussed in detail in the [Kong Enterprise documentation](https://docs.konghq.com/gateway/latest/kong-enterprise/workspaces/).

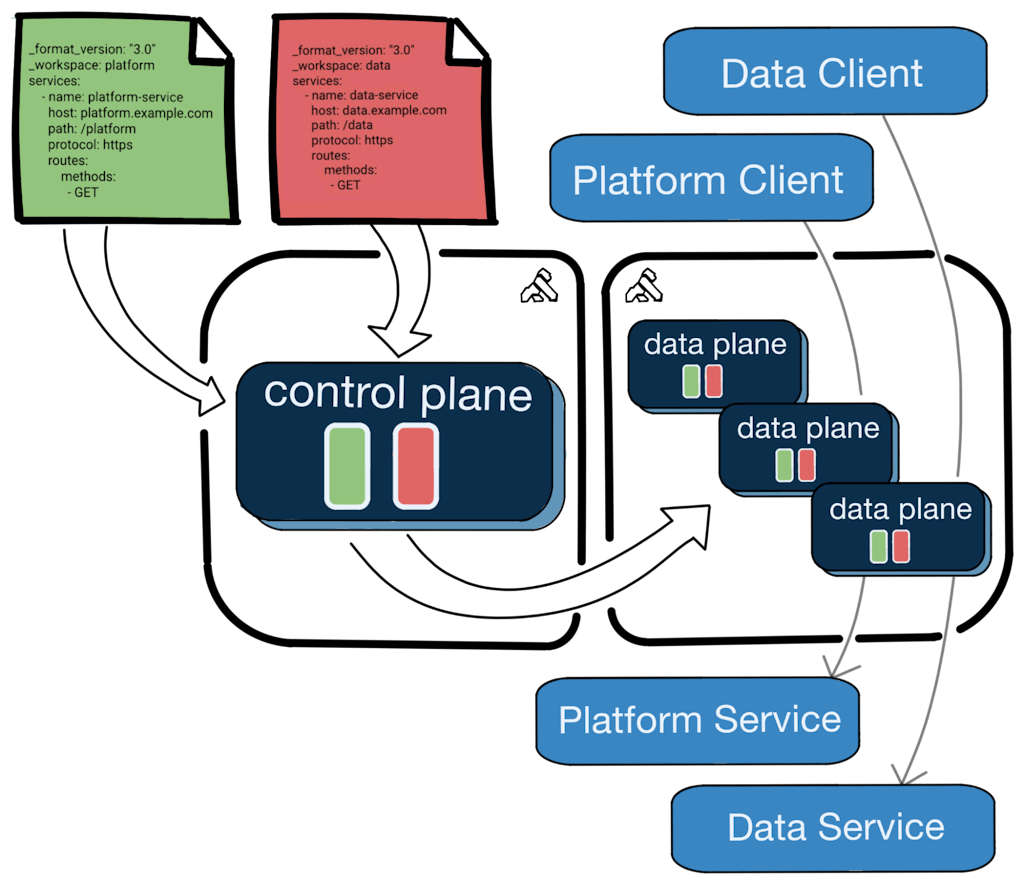

### Multi-tenant CP / Single Tenant DP (Kong Konnect Runtime Group Model)

Kong Konnect is an end-to-end SaaS API lifecycle management platform. Included in Kong Konnect is [Runtime Manager](https://docs.konghq.com/konnect/runtime-manager/), a fully hosted, cloud native gateway control plane management system. Using Runtime Manager, you can provision virtual control planes, called [Runtime Groups](https://docs.konghq.com/konnect/runtime-manager/runtime-groups/), which are lightweight control planes that provision instantly and provide segregated management of runtime configurations.

Before continuing on with details on the Kong Konnect model, what are some reasons engineering teams want a multi-tenant control plane paired with single-tenant data planes? Generally, this deployment strategy allows for centralized control over shared API gateway configurations, while allowing for flexible control over data plane deployment and management. A few specific examples include:

- The central operational teams want to relax control over pre-production environments, for example when there are dozens of pre-production environments spread across multiple development teams. Management of these environments can be delegated to the development teams, freeing them from central control for experimentation and testing.

- Central API management is required but, for performance reasons, data planes can't be shared. In high traffic environments, the noisy neighbor problem for shared data planes may not be an acceptable tradeoff. In this model, the data plane is single tenant while the control plane is multi-tenant.

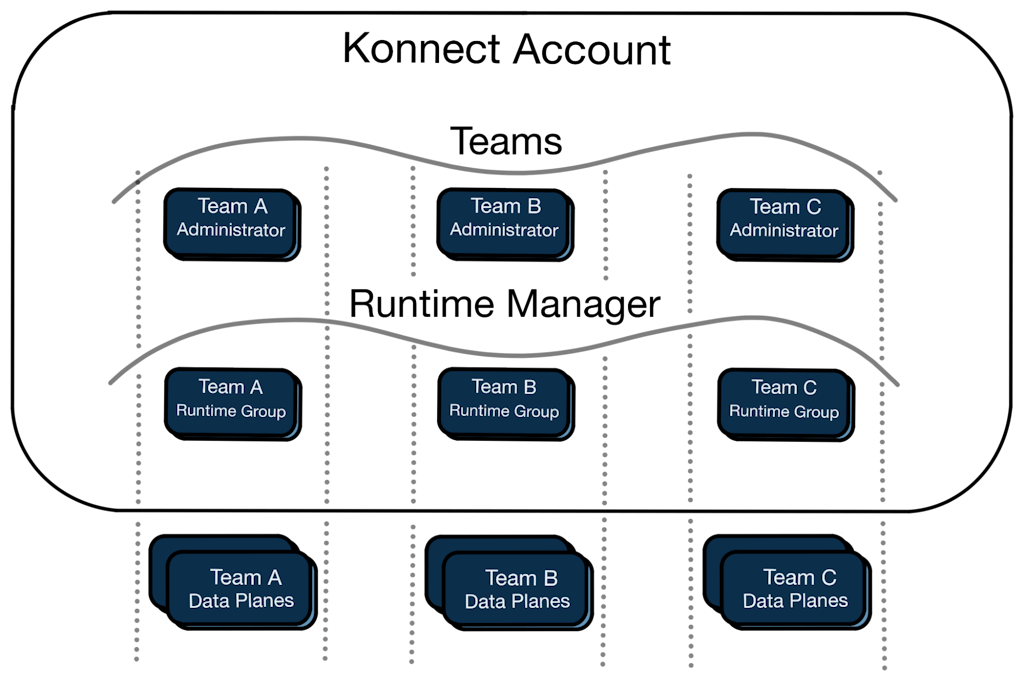

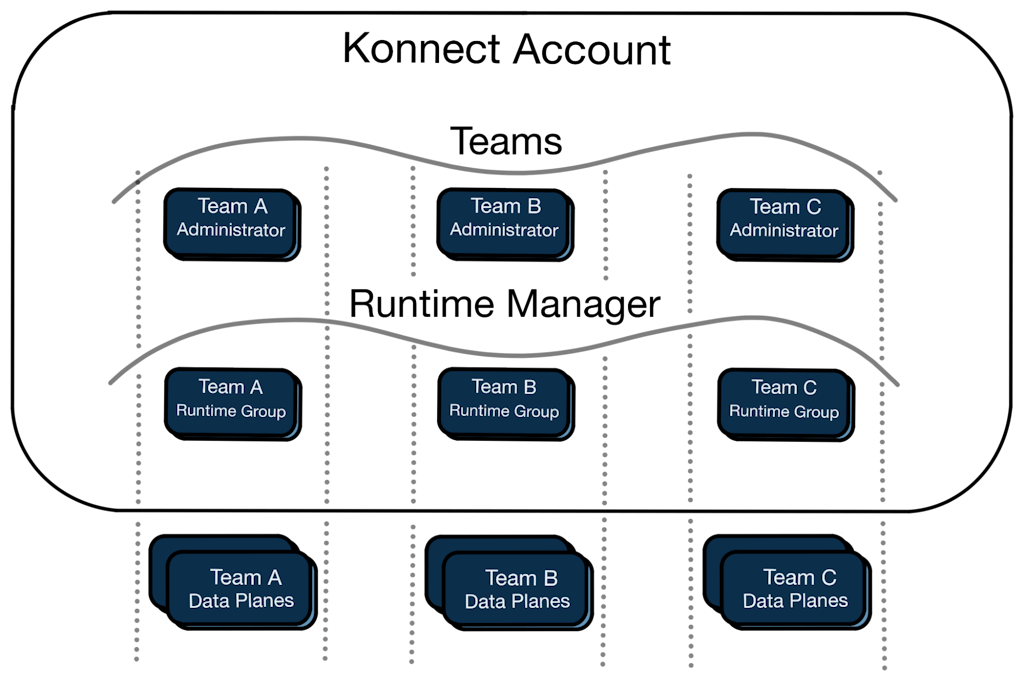

Kong Konnect allows you to define a management hierarchy using [Roles and Teams](https://docs.konghq.com/konnect/org-management/teams-and-roles/). Roles define access to platform resources and behaviors, and Teams allow you to assign roles to groups of users.

Using Runtime Manager, Teams, and Roles together, you can choose a control plane topology that meets your specific needs. In practice, this solution gives you the best of single-tenant and multi-tenant control planes together. The main Kong Konnect account acts as the central governance mechanism over tenants (Teams) and their control planes (Runtime Groups). The Runtime Groups can be managed independently of the central account by the team managers using standard Kong tools.

This provides a single tenant control plane solution from the perspective of the team administrators (the silos in the diagram above), and a multi-tenant solution from the main Kong Konnect account perspective (using teams and runtime groups).

The team-based administrators can manage their isolated data plane configurations using the same tools Kong Enterprise users are used to: decK, the Admin API, and the Kong Konnect web console. Finally, self-managed data planes connect to specific Runtime Groups, providing a fully isolated single-tenant data plane solution for your tenants. The [Kong Konnect documentation](https://docs.konghq.com/konnect/) provides more details.

The Kong engineering team is continuously working on advancements to the Kong Konnect platform. Current plans include adding a feature that allows for the composition of runtime groups into collections that may share configuration aspects. In addition, fully hosted data planes are a future milestone within Kong Konnect, which will allow users to get up and running with Kong without having to deploy or manage any data plane servers or software.

### Kong Enterprise Federated Model

Kong Konnect provides an elegant **multi-tenant CP / single-tenant DP** solution. In certain circumstances, the Kong Konnect platform isn't a viable option (e.g., air-gapped installations). If you're constrained to on-prem Kong Enterprise *and* wish to centralize a control plane *while* allowing tenants to have independent data planes, a [***federated*** deployment model](https://konghq.com/blog/federated-api-management) is an option. Similar to the Kong Konnect model, the Kong Enterprise federated model may allow for relaxed control over data plane deployment while maintaining a centralized governance over configuration.

**⚠️ Warning:** *This strategy isn't generally recommended because of the operational tradeoffs required to successfully implement it. The Kong Konnect strategy, provided above, is a superior solution. The complexities below should be fully understood before implementing this deployment model.*

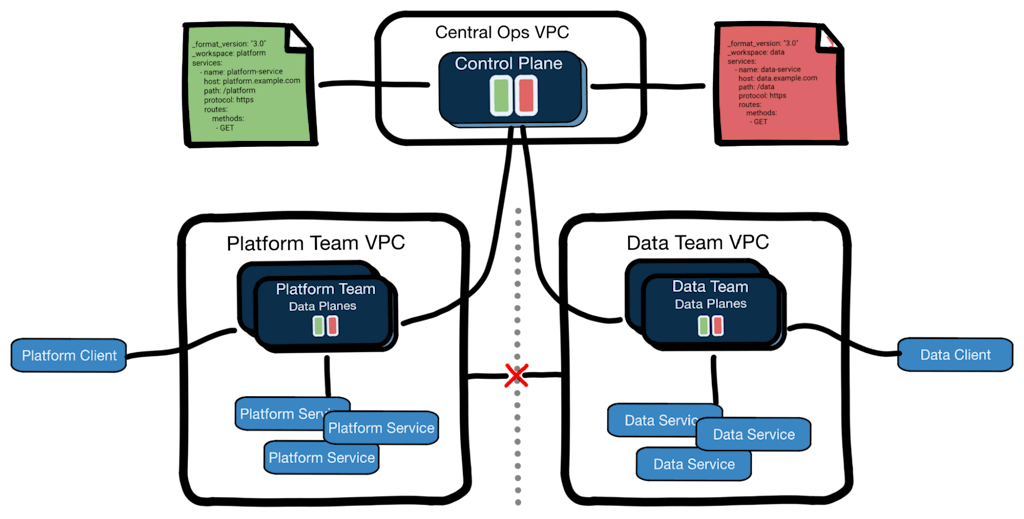

A federated model requires a combination of Kong Gateway enterprise features and network segregation. Importantly, it also requires an understanding of key trade-offs related to configuration isolation and operational complexity, along with some specific deployment considerations.

As mentioned earlier, configurations are pushed to every connected data plane. Users with full access to data plane nodes will have access to the full shared configuration. With the federated model, this may not be desirable as the configuration could contain details that can't be shared across tenants. Some of this risk can be mitigated with secret references, discussed later in this article.

The federated model also requires coordination between the distributed teams and management over their data planes. All data planes connected to a shared control plane must maintain parity when it comes to version, configuration, and installed plugins. This may require the distributed teams to undertake maintenance tasks that aren't relevant to their usage of the gateway.

Custom plugins may be particularly challenging to manage in federated models. Because the full configuration is loaded across all data planes, when a custom plugin is used, it must be distributed to all data planes regardless of the specific tenant's usage of it. When a data plane encounters a configuration for a plugin that isn't installed, it treats it as a failure condition. Thus, teams will need to maintain parity in the installed gateway image so all configurations can be loaded across all tenants' data planes.
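The parity requirement amounts to a set comparison, which a central team might run as a pre-deployment check. The sketch below is a hypothetical helper, not a Kong tool: `missing_plugins` and the custom plugin name `team-a-audit` are invented for illustration, and the plugin lists are assumed to be gathered by some other means.

```python
def missing_plugins(config_plugins, installed_plugins):
    """Return plugins referenced by the shared config but not installed on a node."""
    return sorted(set(config_plugins) - set(installed_plugins))


# Plugins referenced anywhere in the shared configuration, across all tenants.
shared_config_plugins = ["rate-limiting", "key-auth", "team-a-audit"]

# Team B never uses the custom plugin, so its image was built without it.
team_b_image_plugins = ["rate-limiting", "key-auth"]

print(missing_plugins(shared_config_plugins, team_b_image_plugins))
```

Any non-empty result means that node cannot load the shared configuration, even though the missing plugin belongs to a different tenant.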

Overall, central teams will likely need to maintain a "golden image" of the data plane that the distributed teams deploy into their self-managed environments to achieve the required parity. The Kong Gateway documentation provides details on [building your own images](https://docs.konghq.com/gateway/latest/install/docker/build-custom-images/).

Teams may choose to use the [pre|post function serverless plugin](https://docs.konghq.com/hub/kong-inc/serverless-functions/) instead of custom plugins. The serverless plugin requires additional, carefully applied, granular configuration of the `untrusted_lua` sandbox settings (such as `untrusted_lua_sandbox_requires`).

Let's look at *how* federated deployments are achieved.

**⚠️ Warning:** *Control planes should never be shared across production and pre-production environments.*

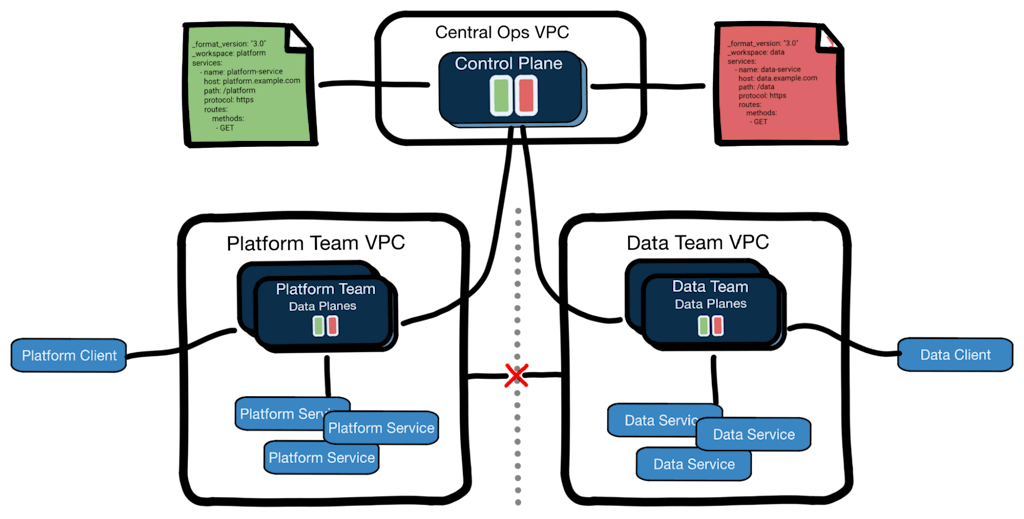

From the perspective of building and managing the Kong Gateway configuration, the federated model is the same as the Kong Enterprise workspaces model described above. The deployment starts with a shared configuration including workspaces, and RBAC is used by the centralized operations team to allow the administrators for each team to manage the configuration for their isolated workspace.

When the runtimes are deployed, they should be deployed into isolated network segments (for example, Virtual Private Clouds). This network isolation allows the data planes to be isolated from clients and services not related to the intended tenant.

One potential issue with this network isolation technique is in the usage of [Ring-Balancer Load Balancing](https://docs.konghq.com/gateway/3.1.x/how-kong-works/load-balancing/#ring-balancer). When this is used (*in any tenant configuration*), Upstream Targets are verified with Health Checks. If Upstreams are heavily used in your shared configuration, data planes across the isolated networks will continuously fail to validate health checks for upstream targets unrelated to the intended tenant. These health check failures can cause resource misuse (failed network connections) and flooding of logging systems if not properly filtered.
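A rough way to estimate the scale of this problem is to count, per data plane, the upstream targets that live outside its network segment. The sketch below is a hypothetical planning aid, not Kong behavior: the function name, segment labels, and target addresses are all invented for illustration.

```python
def unreachable_targets(dp_network: str, targets: dict) -> list:
    """List upstream targets that a data plane in dp_network cannot reach.

    targets maps a target address to the network segment it lives in.
    """
    return sorted(addr for addr, net in targets.items() if net != dp_network)


# Every target in the shared configuration, tagged with its (hypothetical) segment.
shared_targets = {
    "10.0.1.10:8080": "vpc-team-a",
    "10.0.1.11:8080": "vpc-team-a",
    "10.0.2.20:8080": "vpc-team-b",
}

# A data plane in team B's segment would repeatedly fail checks for team A's targets.
print(unreachable_targets("vpc-team-b", shared_targets))
```

Each address in the result represents a health check that will fail on every probe interval, which is where the wasted connections and log volume come from.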

The federated model may prove to be useful, but only in very specific circumstances where the trade-offs are well understood. The majority of customers will find the Kong Konnect Runtime Group model to be better aligned with their needs.

## Sensitive Data Protection

Regardless of which deployment strategy you choose, preventing the unintended exposure of secret data is critical. This is true even when the exposure isn't to the public but across business units or unauthorized individuals within teams.

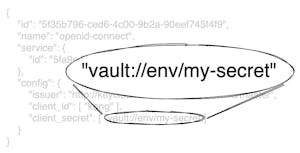

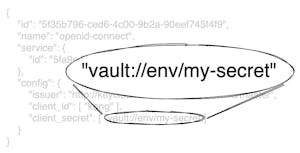

Secrets are integral to many aspects of using Kong gateways. *Both* Kong Enterprise and the Kong Konnect platform support [Secret References](https://docs.konghq.com/gateway/latest/kong-enterprise/secrets-management/).

Secret References allow you to refer to secret values using a Uniform Resource Identifier (URI), which Kong Gateway will use to resolve a secret value at runtime. The URI itself isn't a secret, so it can be safely viewed in plaintext throughout the system.
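The indirection can be illustrated with a toy resolver that looks up references in environment variables. This is a sketch, not Kong's implementation: the `resolve` function is hypothetical, and the `{vault://env/<name>}` shape and the upper-casing rule are modeled on the environment variable vault described in the Kong documentation and should be treated as assumptions here.

```python
import os
import re

# Matches references shaped like {vault://env/some-name}.
_REFERENCE = re.compile(r"^\{vault://env/([a-z0-9-]+)\}$")


def resolve(value: str, environ=os.environ) -> str:
    """Return the secret a reference points to, or the value unchanged if it is not a reference."""
    match = _REFERENCE.match(value)
    if match is None:
        return value
    # Assumed naming rule: dashes become underscores and the name is upper-cased.
    env_name = match.group(1).replace("-", "_").upper()
    return environ[env_name]


# The configuration stores only the reference; the secret lives in the node's environment.
fake_environment = {"DB_PASSWORD": "s3cret"}
stored_in_config = "{vault://env/db-password}"

print(resolve(stored_in_config, fake_environment))
```

Only the reference string travels with the shared configuration, so a tenant who can read the configuration sees the URI but not the value behind it.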

Utilization of secret references is key as you build multi-tenant Kong deployments, and its usage is highly recommended whenever possible.

## Summary

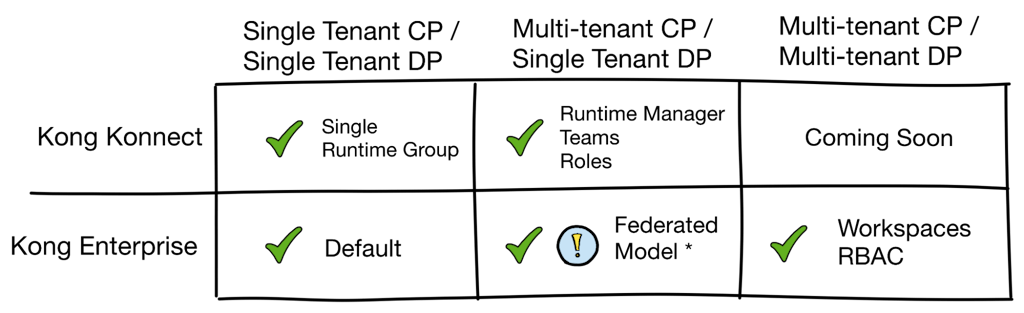

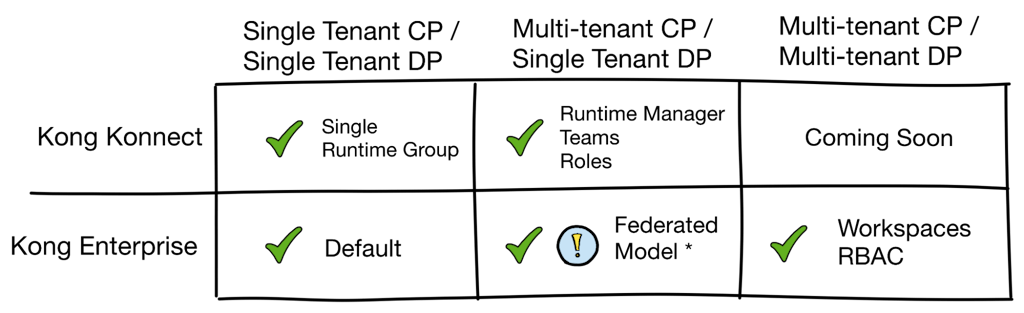

Kong Gateway provides very flexible deployment models, and choosing the proper strategy requires careful consideration. This matrix summarizes the support for various tenancy deployment strategies across Kong Enterprise and Kong Konnect.

*\* The Kong Enterprise Federated model is listed as a Single Tenant DP solution, to compare it most closely with the Kong Konnect Runtime Manager model. However, the Federated Model is not a true Single Tenant DP solution. As described above, the DP configurations are shared among the runtime instances, but the model does allow for semi-independent tenant runtime deployments.*

The Kong Enterprise Federated Model described in this article should only be utilized in very specific cases where the tradeoffs are well known. Generally, customers will favor the Kong Konnect models, as they provide superior solutions and the benefits of SaaS-based management.

Balancing the tradeoffs between operational complexity, data isolation, and resource cost optimizations is important to the success of your project. Work with your Kong support team to help understand these tradeoffs, and make the best decision for your organization on how to optimally use Kong Gateway.

## Get started today with Kong Konnect

Kong Konnect offers a free tier specifically to support users who are looking for an efficient way to run Kong Gateway. Start using [Kong Konnect for free](https://konghq.com/products/kong-konnect/register) today. It's the fastest and easiest way to deploy, secure, and manage your APIs.