# Govern all AI traffic. Do it with one gateway.

Govern LLM, MCP, and agent-to-agent (A2A) traffic with the same Kong AI Gateway.

## WITHOUT KONG

## Fragmented API & AI traffic

## WITH KONG

## APIs. LLMs. Agents. One platform for API and AI traffic.

## Build agents faster. Optimize token spend. Mitigate AI risks.

Govern how developers, apps, and agents consume LLMs. Control everything from access to data leakage to token usage.

Generate MCP servers and govern how agents discover and consume them. Optimize context and implement critical auth solutions for production-ready MCP.

Roll out an agent gateway to fully govern *all* multi-agent traffic, with auth, observability, and auditability baked in.

Don’t settle for fragmented AI gateway solutions, and don’t settle for solutions that aren’t hardened for enterprise usage. Unify all AI traffic in one secure, resilient platform.

## The agentic era demands a new kind of agentic infrastructure

Govern all generative AI and agentic AI traffic with a single gateway for LLM, MCP, and A2A connectivity.

## 01/ Control, manage, and secure gen AI traffic

## Enforce advanced LLM policies

- Stop data leakage and avoid compliance nightmares with PII sanitization.

- Make LLM traffic more efficient with semantic caching, routing, and load balancing.

- Protect resources and ensure compliance with semantic prompt guards, access control, and more.
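
To make the PII sanitization idea concrete, here is a minimal Python sketch of redacting sensitive values from a prompt before it is forwarded to an LLM. It is an illustration only, not Kong's implementation: the `sanitize_prompt` function and the two regex patterns are hypothetical, and production-grade PII detection covers far more categories than these.

```python
import re

# Hypothetical patterns for illustration; not taken from Kong AI Gateway.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def sanitize_prompt(prompt: str) -> str:
    """Replace matched PII with placeholder tokens before the prompt is forwarded."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    prompt = US_SSN.sub("[SSN]", prompt)
    return prompt


if __name__ == "__main__":
    print(sanitize_prompt("Contact jane@example.com, SSN 123-45-6789"))
```

Running the redaction at the gateway, rather than in each application, means every consumer gets the same policy without code changes.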

## 02/ MCP-ready. Prod-ready.

## Make MCP-powered agents a production reality

- Automatically generate intelligent MCP tools and servers on top of Kong-managed APIs.

- Enforce auth for MCP server access control.

- Optimize token spend through MCP context optimization.

## 03/ Multi-agent ready

## Govern complex, multi-agent systems

- Observe all A2A traffic and capture A2A-specific metrics.

- Capture detailed telemetry on every A2A call, including payloads, latency, token usage, and errors.

- Enforce centralized AuthN/Z for all A2A traffic.

- Audit and track every A2A RPC call with information around caller identity, capabilities invoked, and more.

## 04/ Govern the rest of the AI data path, too

## Because AI traffic is more than just AI-native traffic

- Govern how agents consume context from your API and event data estate.

- Observe and govern the application, intelligence, and context layers – all from one platform.

- Deliver enterprise data, events, APIs, and agent-ready context.

## 05/ AI quota management

## Advanced LLM and token quota management

- Set up user, model, and time-bound quotas around LLM consumption and token spend.

- Enforce quotas at the gateway level.

- Build showback and chargeback for LLM, agent, and MCP usage across the enterprise.
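
As a rough illustration of user, model, and time-bound token quotas, the Python sketch below tracks token usage per (user, model) pair in a fixed time window. The `TokenQuota` class and its parameters are hypothetical and simplified; they are not Kong's API, and a real gateway would likely keep counters in shared storage rather than in process memory.

```python
import time
from typing import Callable, Dict, Tuple


class TokenQuota:
    """Fixed-window token quota keyed by (user, model). Hypothetical sketch."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        # (user, model) -> (window_start, tokens_used_in_window)
        self._usage: Dict[Tuple[str, str], Tuple[float, int]] = {}

    def try_consume(self, user: str, model: str, tokens: int) -> bool:
        """Return True and record usage if the request fits within the quota."""
        now = self.clock()
        key = (user, model)
        start, used = self._usage.get(key, (now, 0))
        if now - start >= self.window:
            # The previous window has expired, so start a fresh one.
            start, used = now, 0
        if used + tokens > self.limit:
            self._usage[key] = (start, used)
            return False
        self._usage[key] = (start, used + tokens)
        return True
```

The recorded per-key usage is also the raw data a showback or chargeback report would aggregate.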

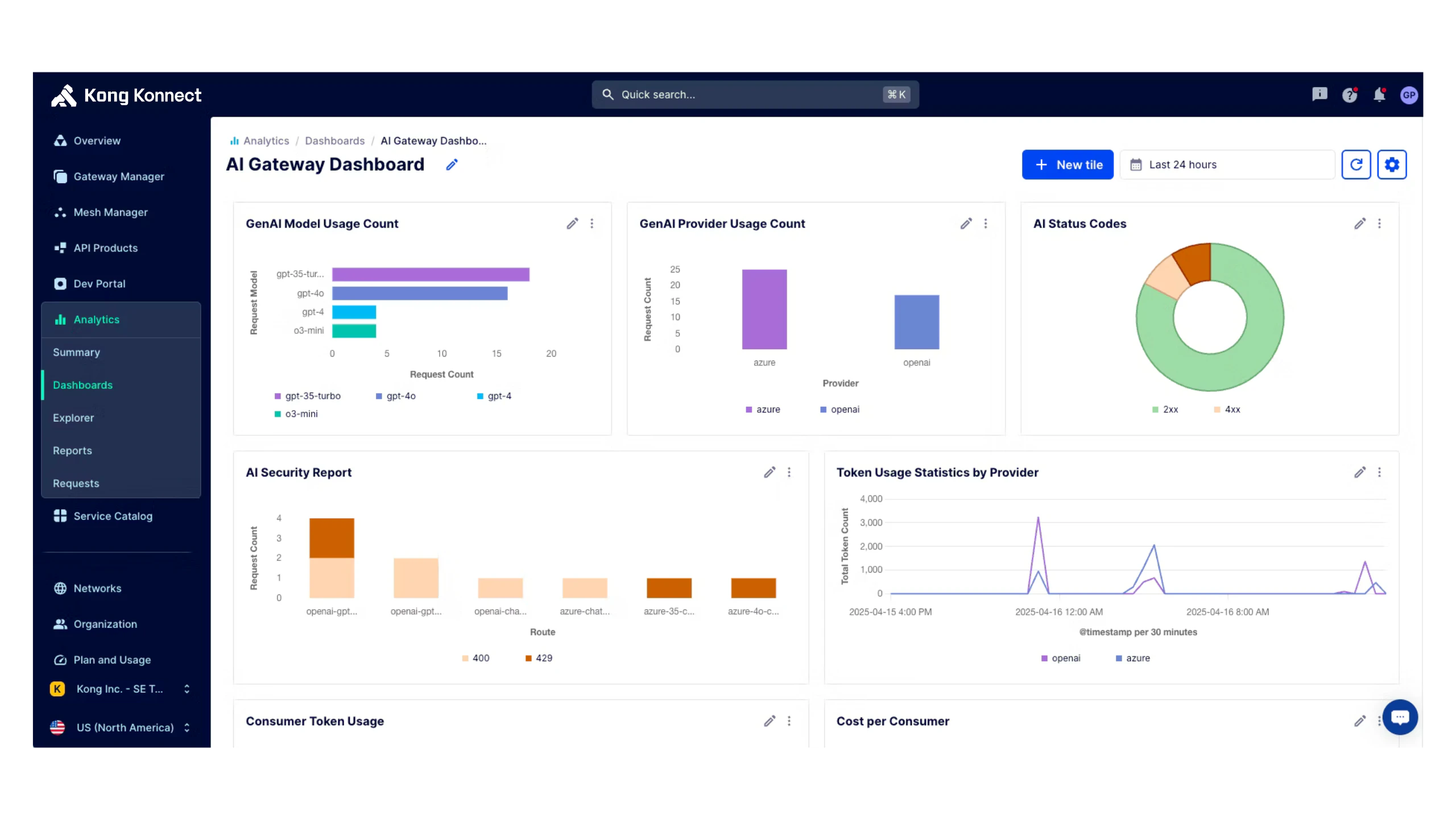

## 06/ AI metrics and observability

## L7 observability on AI traffic for cost monitoring and tuning

- Track AI consumption, tool usage, token spend, and more.

- Optimize AI usage and cost with predictive consumption models.

- Debug AI exposure via logging, tracing, and more.

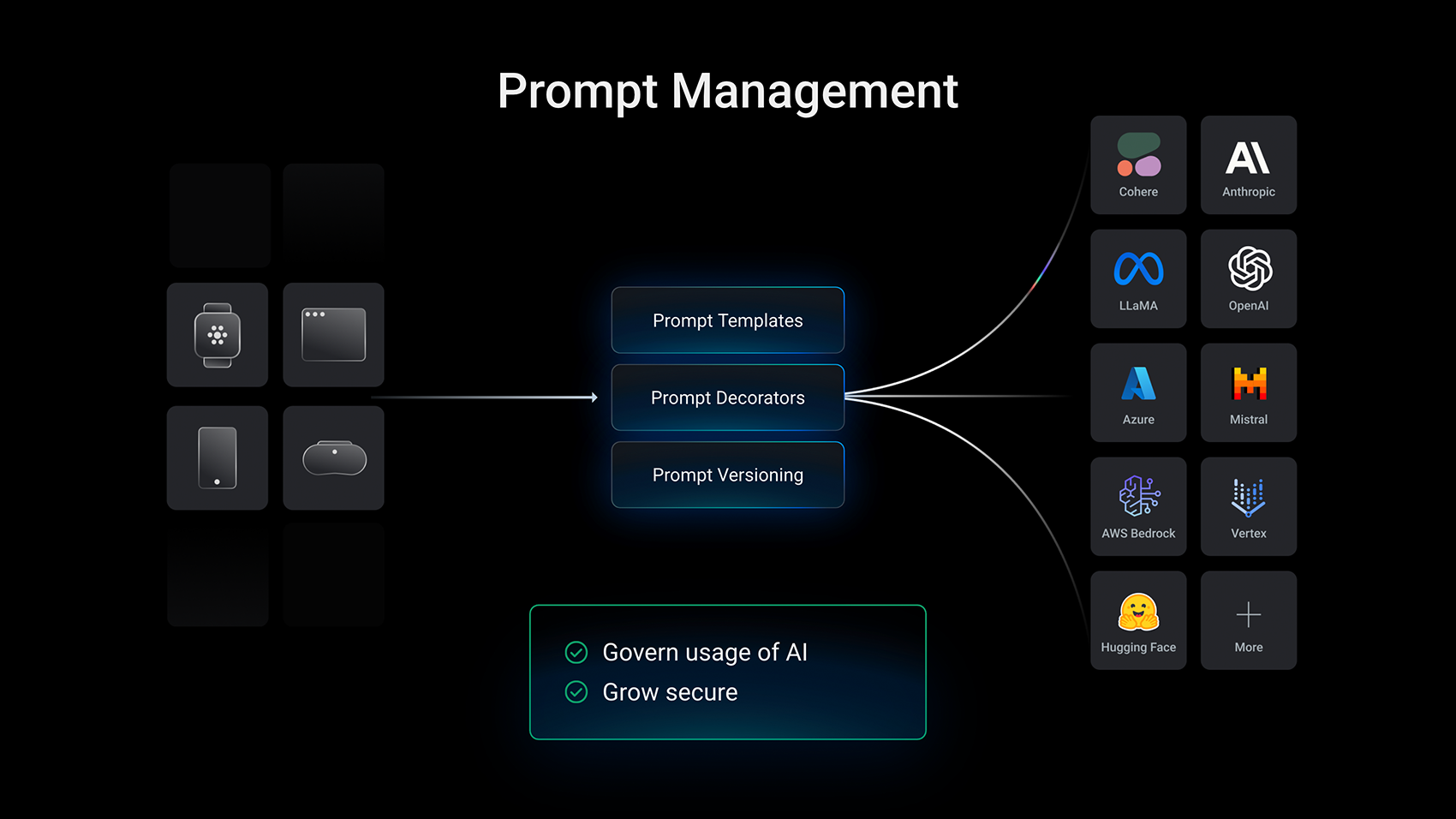

## 07/ Multi-LLM support

## Ensure every LLM use case is covered

- Use Kong’s unified API interface to work with multiple AI providers at the flip of a switch.

- Seamlessly switch between AI providers to unlock new use cases and ensure high availability in the event of downtime.
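
The failover behavior described above can be sketched in a few lines of Python: try each provider in priority order behind a single call signature and fall through on errors. The `complete_with_failover` function and the provider callables are hypothetical stand-ins, not Kong's interface; a gateway would apply the same idea at the proxy layer.

```python
from __future__ import annotations

from typing import Callable, Sequence

# A provider is modeled as any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


def complete_with_failover(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any provider error triggers the next fallback
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

Because callers only see one function, swapping or reordering providers does not require changes in application code.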