# Deploy AI at Enterprise Scale

The AI infrastructure platform Fortune 500 companies trust to secure, govern, and optimize every LLM interaction.

## Why Enterprise AI Leaders Choose Kong

Enforce consistent security policies across OpenAI, Azure AI, AWS Bedrock, and Google Vertex AI from a single control plane. Eliminate shadow AI with centralized visibility and compliance controls that satisfy your security and procurement teams.

Track token consumption, cost allocation, and usage patterns across every team and project. Pre-built executive dashboards provide the data your CFO needs to approve AI investments and measure ROI.

Semantic caching eliminates redundant LLM calls. Intelligent routing directs each request to the most cost-effective model. Enterprise customers report a 40-60% reduction in token spend within the first 90 days.
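
To make the idea concrete, here is a minimal sketch of semantic caching: a new prompt that is close enough to an earlier one is answered from the cache, and the model is not called. It is an illustration, not Kong's implementation. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and `SemanticCache`, `answer`, `call_llm`, and the 0.8 threshold are all hypothetical names and values chosen for the example.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    """Hypothetical cache that matches prompts by similarity, not exact text."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold  # assumed cutoff; tune for a real model
        self.entries = []  # list of (vector, response) pairs

    def lookup(self, prompt):
        vec = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(vec, e[0]), default=None)
        if best is not None and cosine(vec, best[0]) >= self.threshold:
            return best[1]
        return None

    def store(self, prompt, response):
        self.entries.append((embed(prompt), response))


def answer(prompt, cache, call_llm):
    """Return a cached response when one is similar enough, else call the model."""
    cached = cache.lookup(prompt)
    if cached is not None:
        return cached
    response = call_llm(prompt)
    cache.store(prompt, response)
    return response
```

In this sketch, two near-identical questions trigger a single model call, while an unrelated question triggers a second one.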

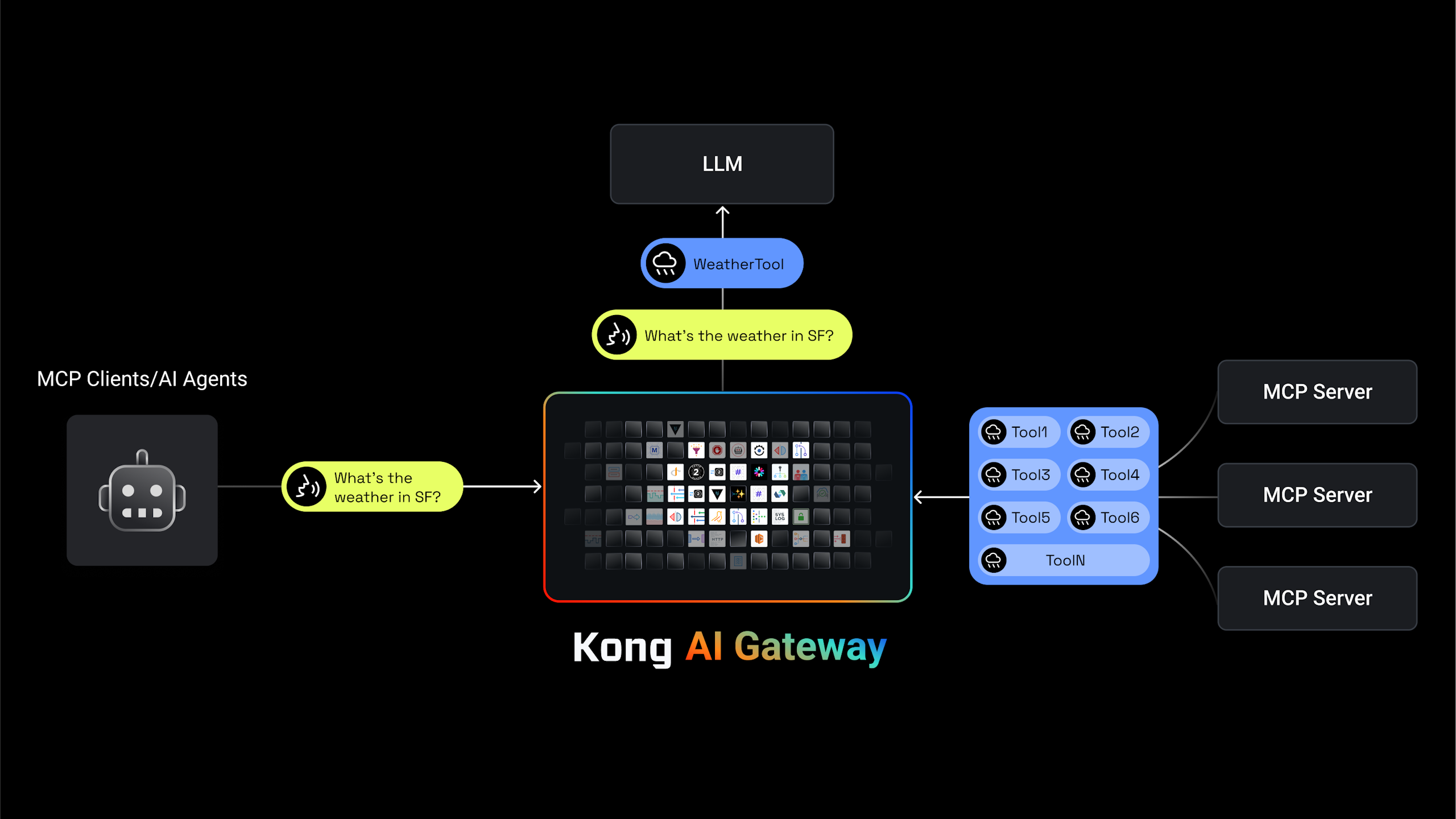

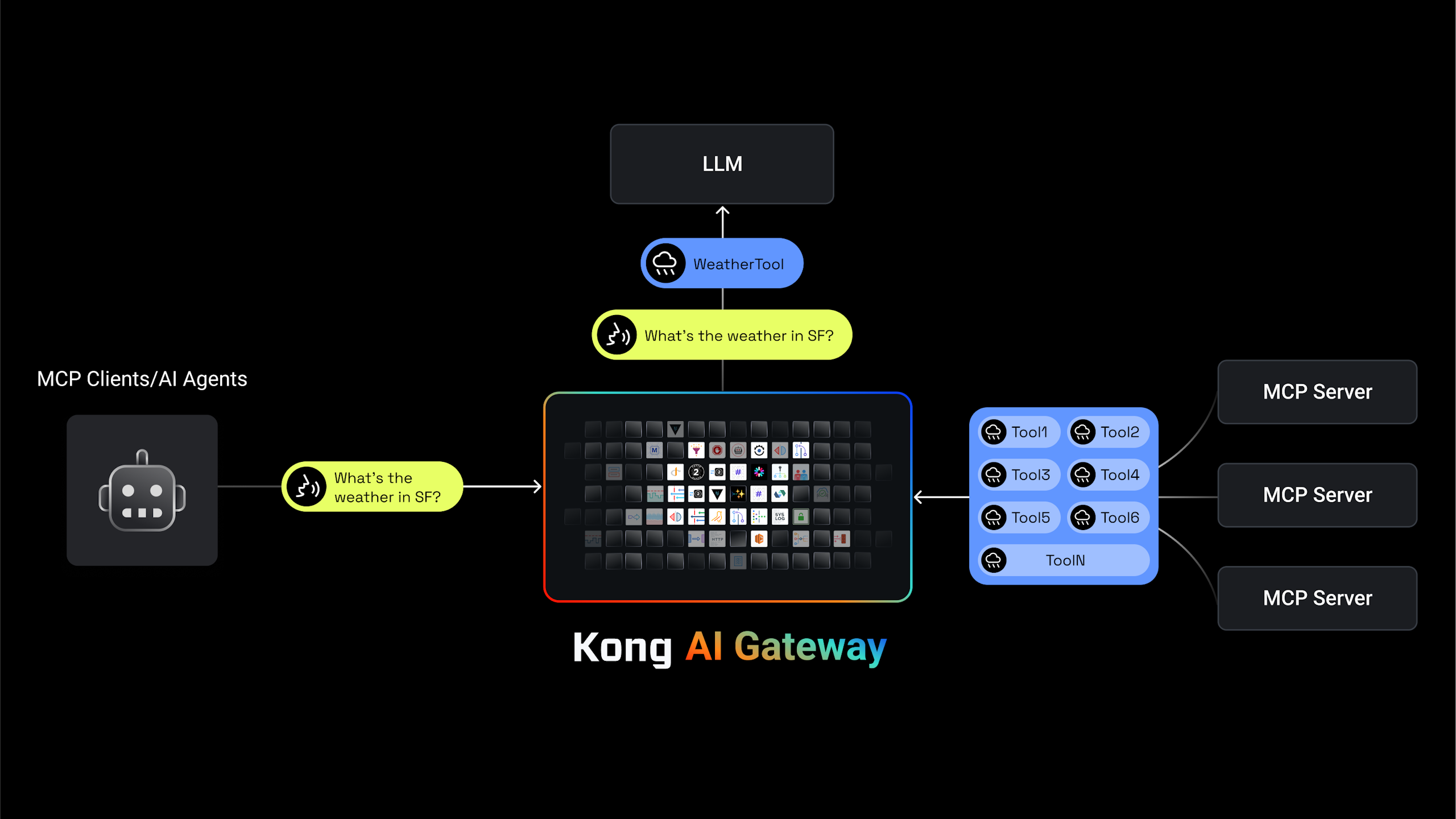

Automatically generate enterprise-grade MCP servers with built-in authentication, observability, and policy enforcement. Deploy AI agent infrastructure that meets your security requirements from day one.

## Enterprise-Grade AI Infrastructure, Built for Scale

Govern the entire AI lifecycle with Kong Konnect LLM and MCP infrastructure.

## Automated Compliance & Policy Enforcement

Enforce organizational AI policies consistently across every team and application. Built-in PII detection across 20+ data categories and 12 languages ensures compliance with GDPR, CCPA, and industry-specific regulations—automatically.
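
As a rough illustration of what gateway-level PII handling involves, the sketch below redacts two categories from a prompt before it would be forwarded to a model. It is not Kong's detector: the `PATTERNS` table and `redact` function are hypothetical, and two regular expressions cover far less than a production system handling many categories and languages.

```python
import re

# Hypothetical patterns for two PII categories; real detectors cover many more.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text):
    """Replace each detected value with its category label.

    Returns the redacted text and the list of categories that were found.
    """
    found = []
    for label, pattern in PATTERNS.items():
        if pattern.search(text):
            found.append(label)
            text = pattern.sub(f"[{label}]", text)
    return text, found
```

The list of detected categories could also feed an audit log, so compliance teams can see which kinds of data were blocked without storing the values themselves.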

## MCP Infrastructure That Meets Enterprise Requirements

Centralized authentication and access control for all MCP servers across your organization.

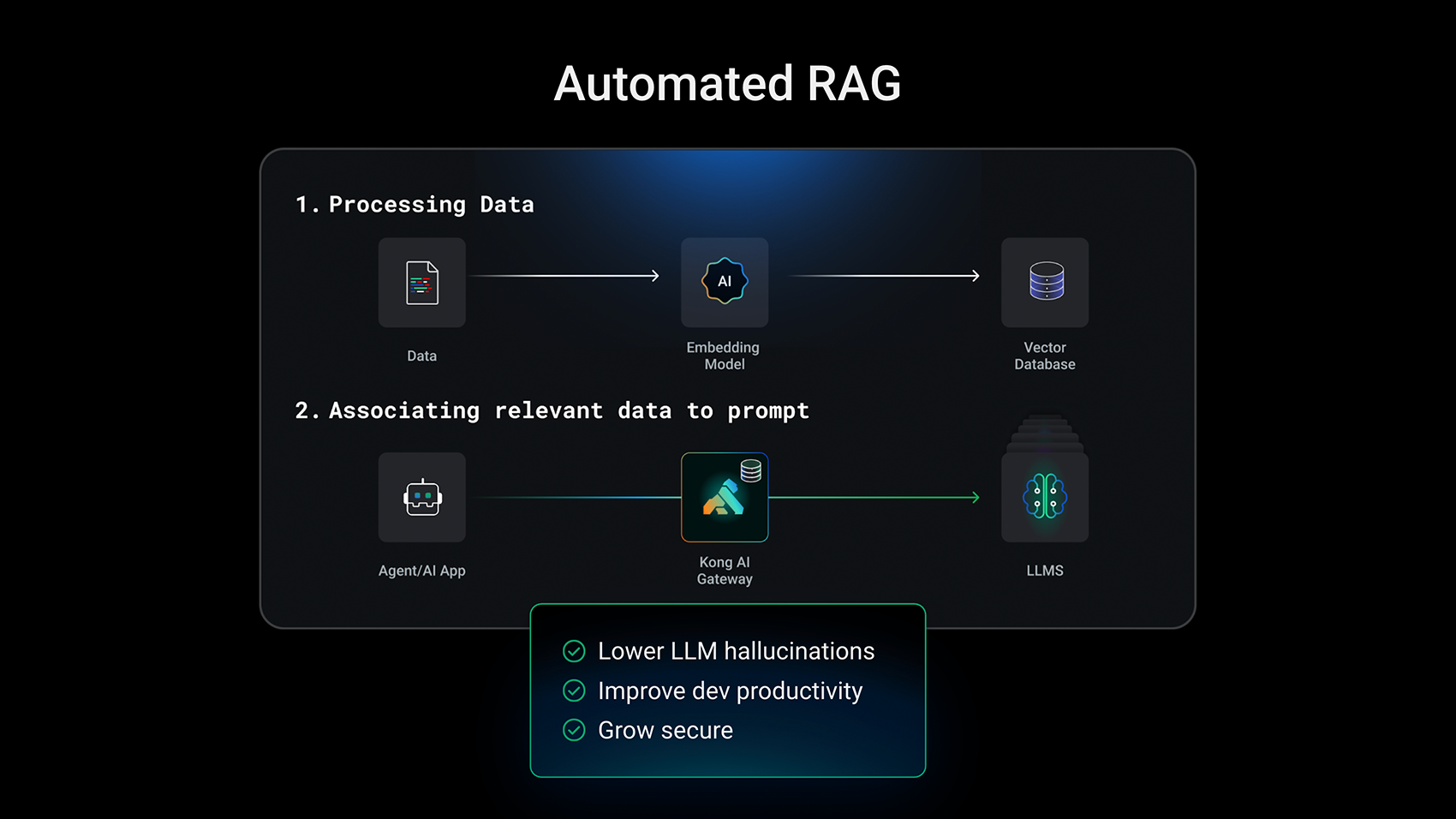

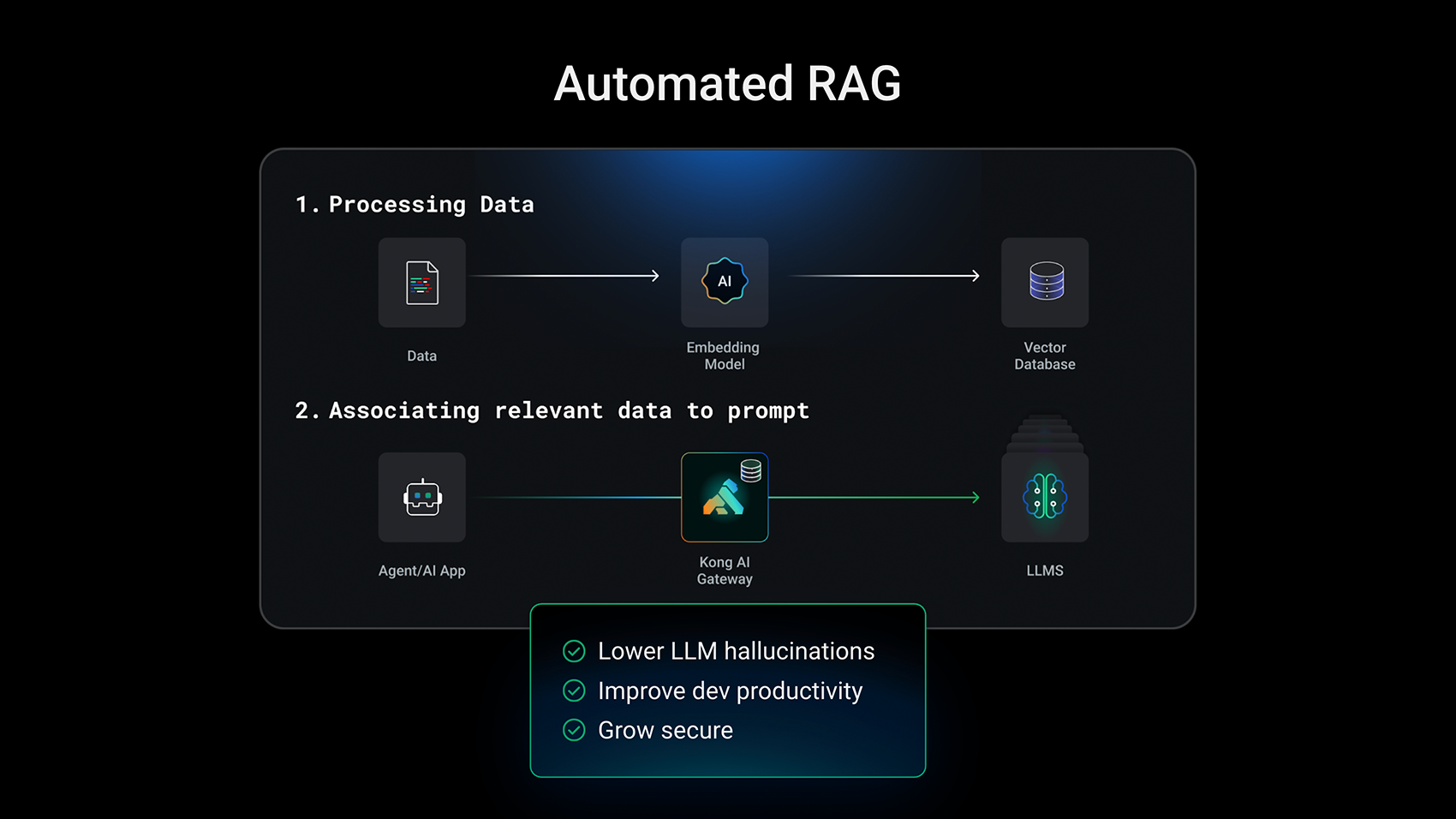

## Reduce Hallucinations with Governed RAG at Scale

- Deploy RAG infrastructure at the gateway layer, with no application changes required

- Enforce consistent context injection across all AI applications to improve response accuracy

- Maintain centralized control over knowledge sources with enterprise access controls and audit logging

## Executive Dashboards for AI Cost and Performance

- Track AI spend by team, project, and application with real-time cost attribution

- Forecast token consumption and budget requirements with predictive analytics

- Integrate with existing observability tools via OpenTelemetry for unified monitoring
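
For a sense of what cost attribution means in practice, the sketch below rolls token usage up into spend per team. It is an assumption-laden example, not the product's data model: the record fields, the `cost_by_team` function, the model names, and the prices in `PRICE_PER_1K` are all hypothetical.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; not real provider pricing.
PRICE_PER_1K = {"model-a": 0.5, "model-b": 0.1}


def cost_by_team(usage_records):
    """Sum the estimated spend for each team from per-request usage records."""
    totals = defaultdict(float)
    for rec in usage_records:
        price = PRICE_PER_1K[rec["model"]]
        totals[rec["team"]] += rec["tokens"] / 1000 * price
    return dict(totals)
```

The same grouping could be keyed by project or application by swapping the `team` field for another attribute on each record.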

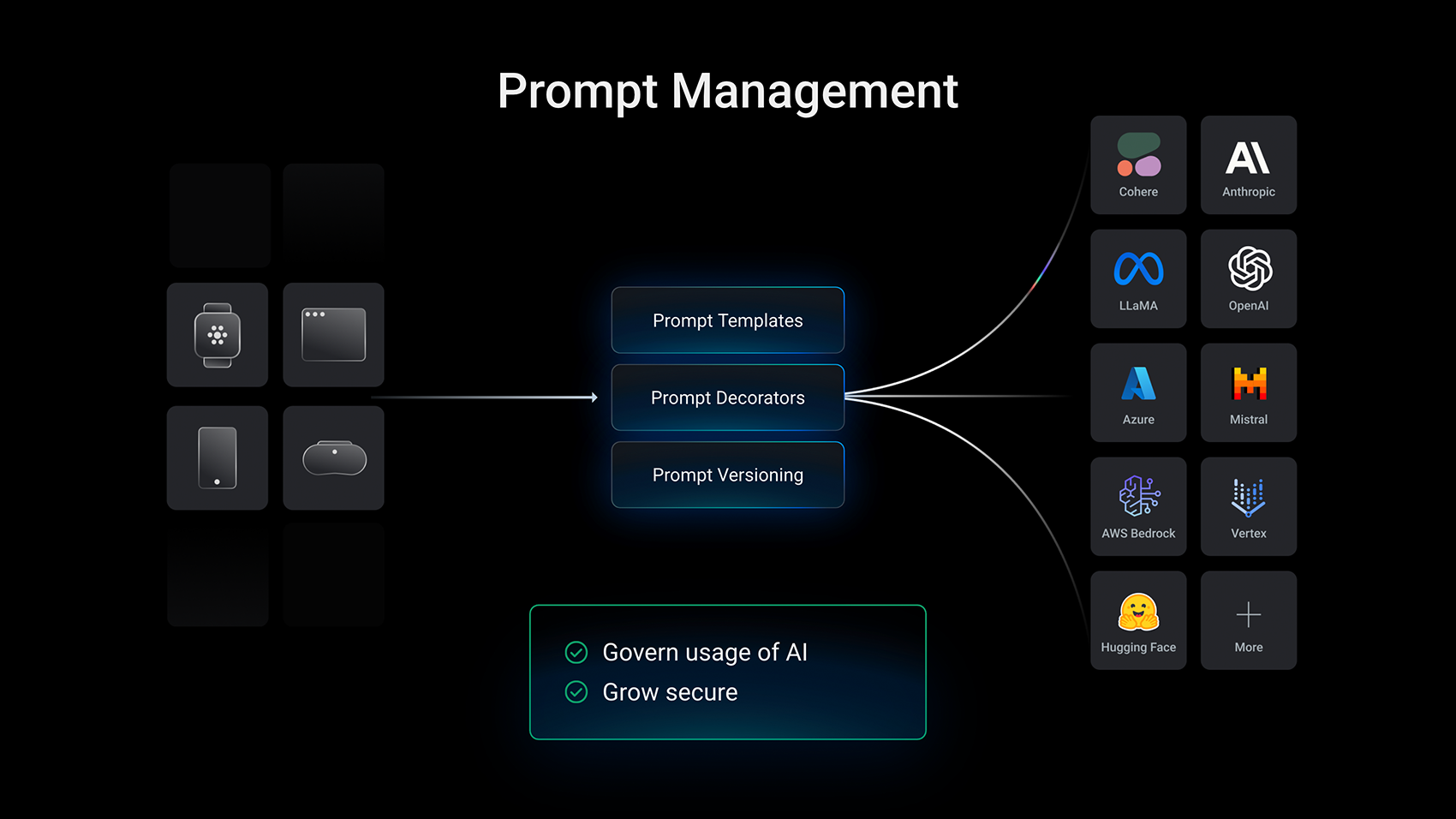

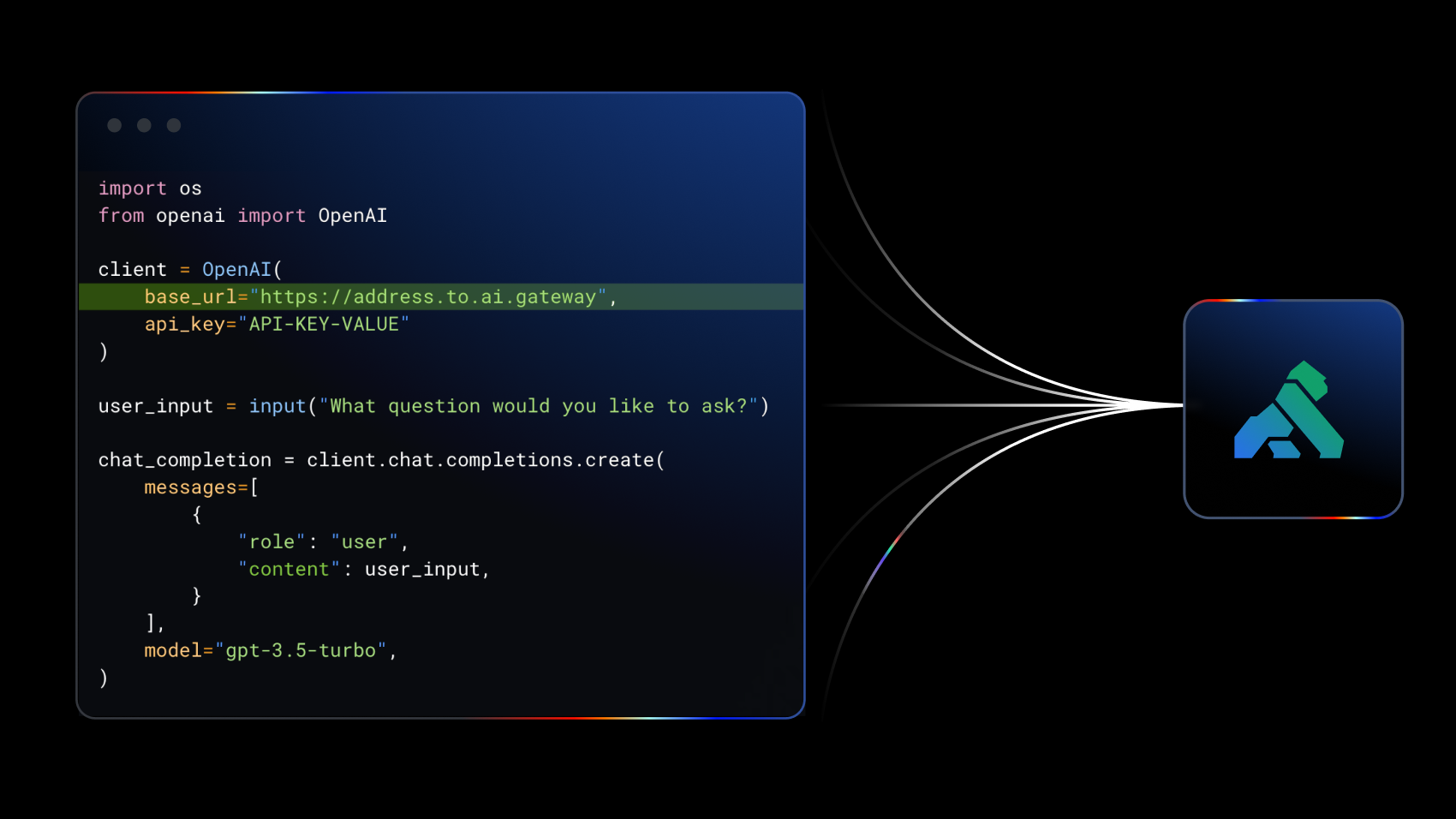

## Vendor Flexibility Without Lock-In

- Maintain a single integration point while accessing OpenAI, Azure AI, AWS Bedrock, and Google Vertex AI

- Automatic failover between providers ensures 99.99% availability for business-critical AI applications

- Switch providers or models without application changes, preserving your negotiating leverage
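
Provider failover behind a single integration point can be pictured with a short sketch: the caller supplies an ordered list of providers, and the request moves to the next one when a call fails. This is an illustration only. `ProviderError` and `call_with_failover` are hypothetical names, and the provider callables stand in for real client code.

```python
class ProviderError(Exception):
    """Raised by a provider callable when a request fails."""


def call_with_failover(prompt, providers):
    """Try each (name, callable) pair in order and return the first success.

    Returns a (provider_name, response) tuple. Raises ProviderError if every
    provider fails, with the individual errors collected in the message.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))
```

Because the application only ever calls `call_with_failover`, reordering or replacing providers is a configuration change and requires no code change in the application.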

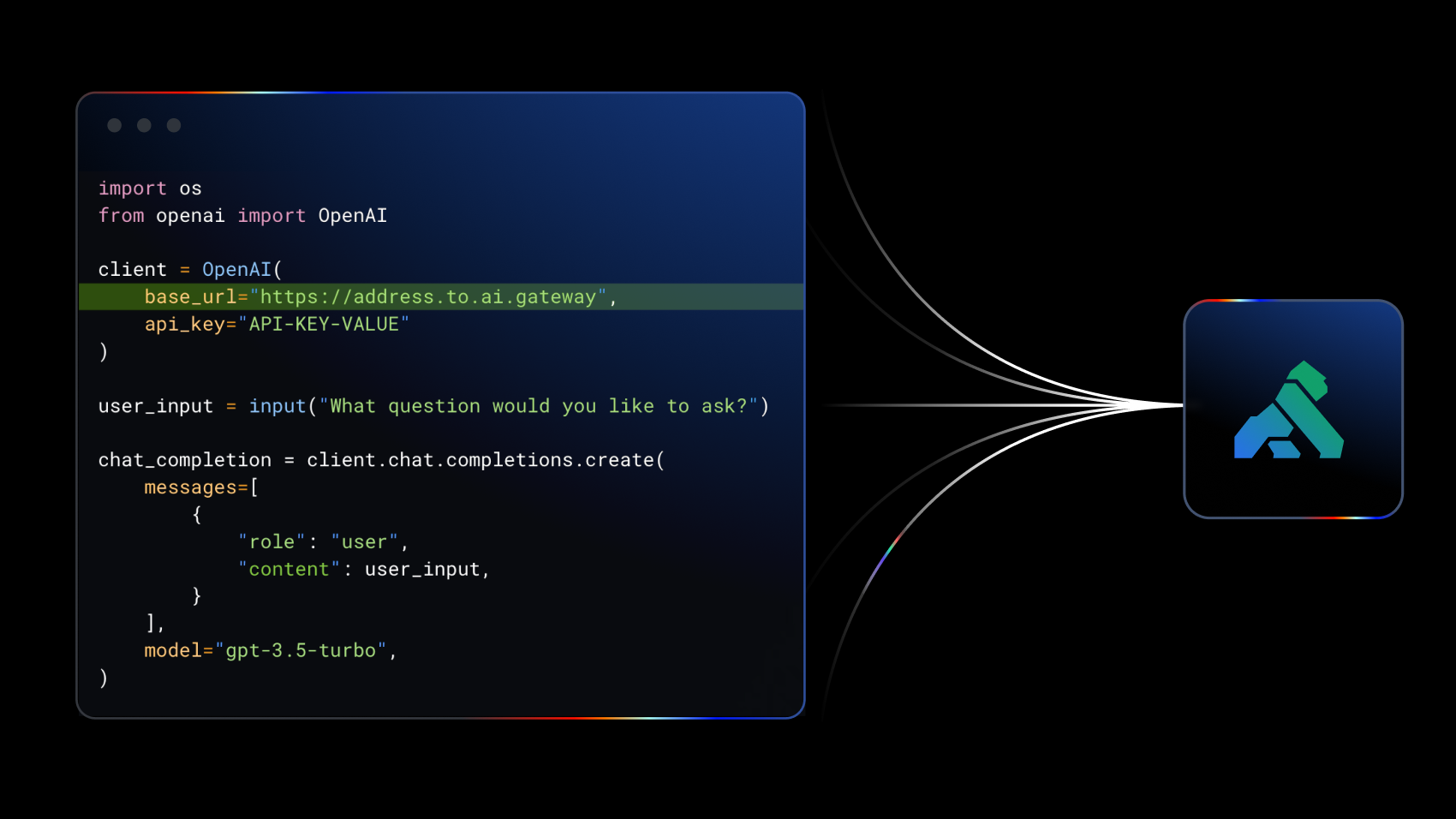

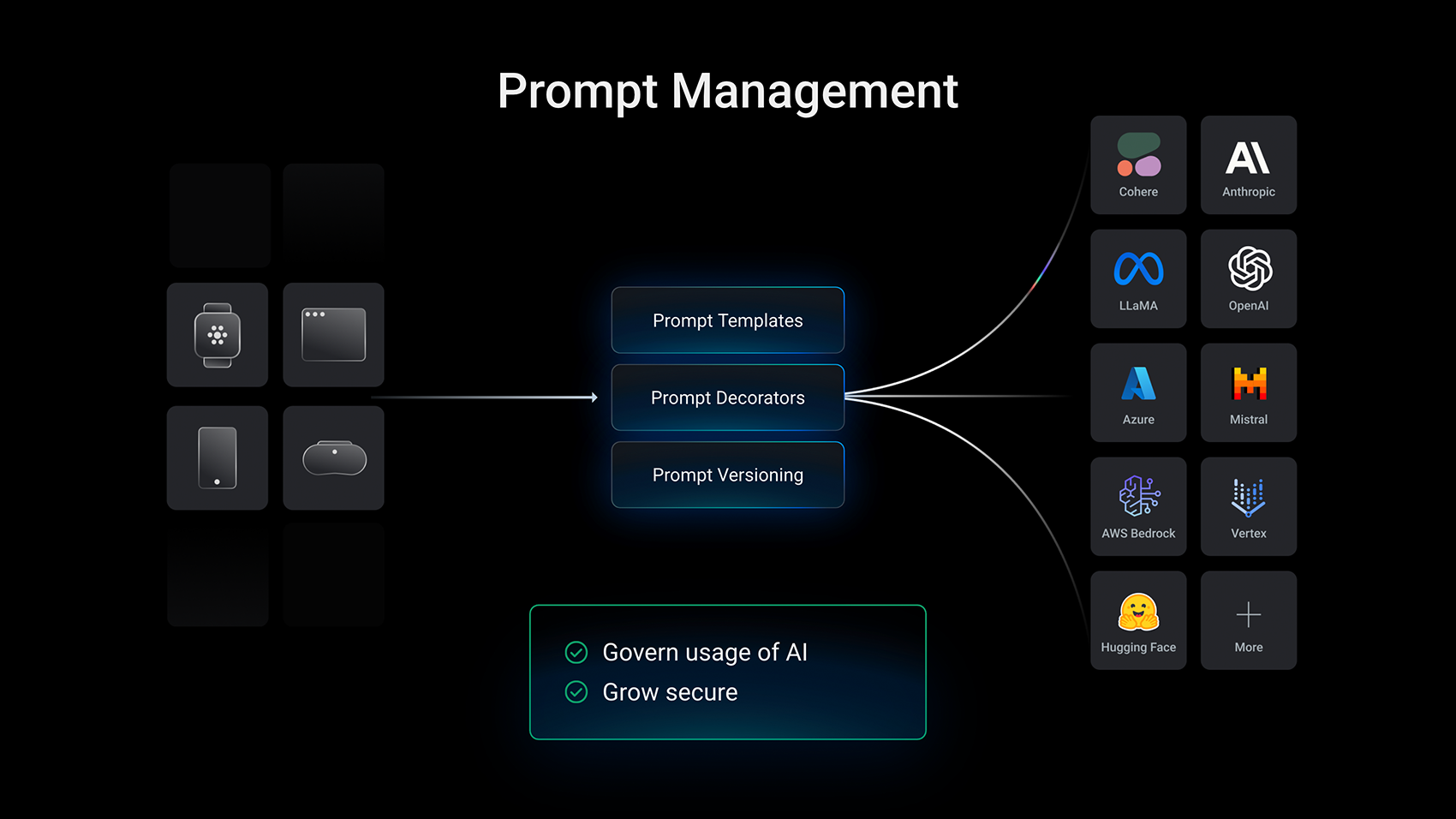

## Deploy AI Capabilities Without Development Cycles

- Enable AI across your organization without competing for engineering resources

- Pre-built integrations let business teams launch AI initiatives in days, not quarters

- IT maintains governance and security while business teams move fast

## Your AI Strategy Runs on Your API Strategy

Every AI agent, every LLM call, every MCP interaction flows through APIs. Kong's unified platform gives you the control, visibility, and governance to scale AI confidently—built on the same infrastructure trusted by the world's largest enterprises for API management.

## Ready to Scale AI Securely?

See why Goldman Sachs, NASDAQ, and United Airlines trust Kong for their AI infrastructure. Talk to our enterprise team about your AI initiatives.