# The API and AI platform for platform builders

Unify and manage every point of innovation-critical connectivity in the Kong Konnect unified API and AI platform.

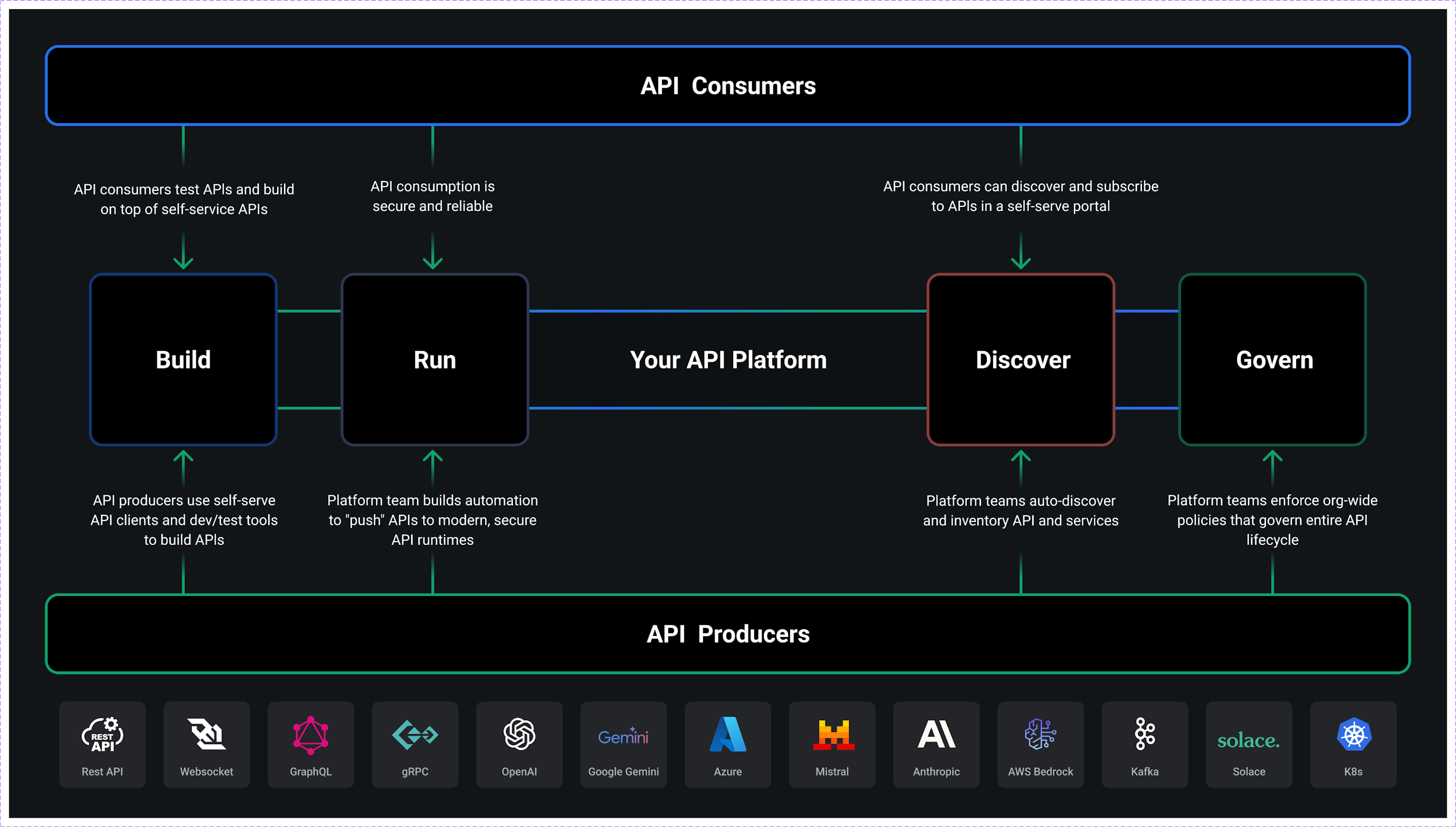

## Build, run, discover, govern, and monetize all AI traffic – in one platform

Set up self-service, secure paved roads for provisioning API and AI infrastructure for producers and self-service API and AI products for consumers.

Register and publish agent context as self-serve products in the Konnect Developer Portal and MCP registry.

Define entitlements, then meter and bill for agent context consumption at every layer: the agent, the LLM, the MCP server, and individual MCP tools.

Design automated guardrails to enforce security, observability, and reliability best practices across every instance where an agent consumes your LLMs, MCP servers, APIs, and event streams.

## Why Kong Konnect?

Only one platform gives you a unified workspace for managing all API and AI traffic.

## 01/ Build your context mesh

## Connect agents with enterprise context

- Auto-discover APIs and compose endpoints into MCP tools.

- Auto-generate MCP servers that leverage composed MCP tools. One-click deploy to Kong AI Gateway infrastructure.

- Design and orchestrate agentic integrations with LLMs, MCP servers, APIs, and real-time data.

## 02/ Multi-runtime, multi-protocol

## Federated gateway infrastructure for the agentic era (and other technology, too)

- Provision and govern gateway runtime infrastructure for API, AI, agent, event streaming, and microservices use cases.

- Agents can leverage the Konnect MCP server to start helping you on your agentic API platform journey.

- Set up and enforce automated guardrails that ensure consistency and compliance.

- Set up control plane groups for isolated access and management of runtime infrastructure for specific environments, lines of business, product lines, etc.

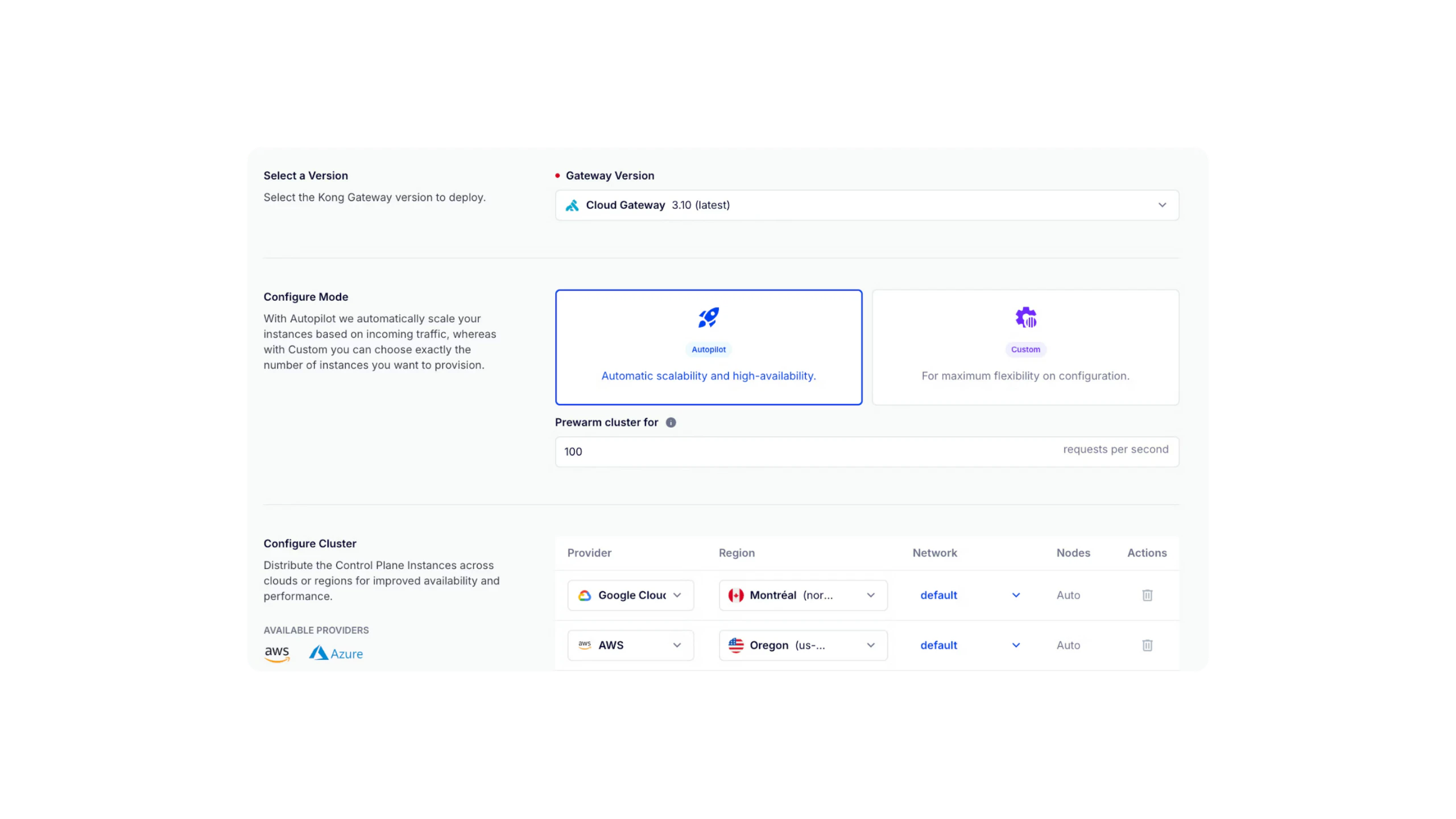

## 03/ Flexible deployment

## Deploy and host gateway infrastructure wherever agents run

- Keep all connectivity data locked down in your own environment with support for hybrid deployments.

- Make the move to multi-cloud with dedicated, Kong-managed infrastructure in your cloud provider and region of choice.

- Enforce encryption and PII sanitization so that no cloud environment ever sees sensitive data during API and agentic transactions.

## 04/ Multi-cloud, vendor-managed

## Make the move to multi-cloud, without hosting anything yourself

- Become a multi-cloud organization without having to acquire multiple cloud provider AI and API gateway solutions.

- Use Kong-managed AI and API gateways, but choose the cloud provider and region where you want us to host them.

- Every gateway is set up in cloud infrastructure dedicated to your Konnect organization.

- Let the Konnect smart DNS automatically choose the best region to use for each API request based on real-time performance and latency affinity.

- Offload scaling to us so you can focus on building killer API products and services.

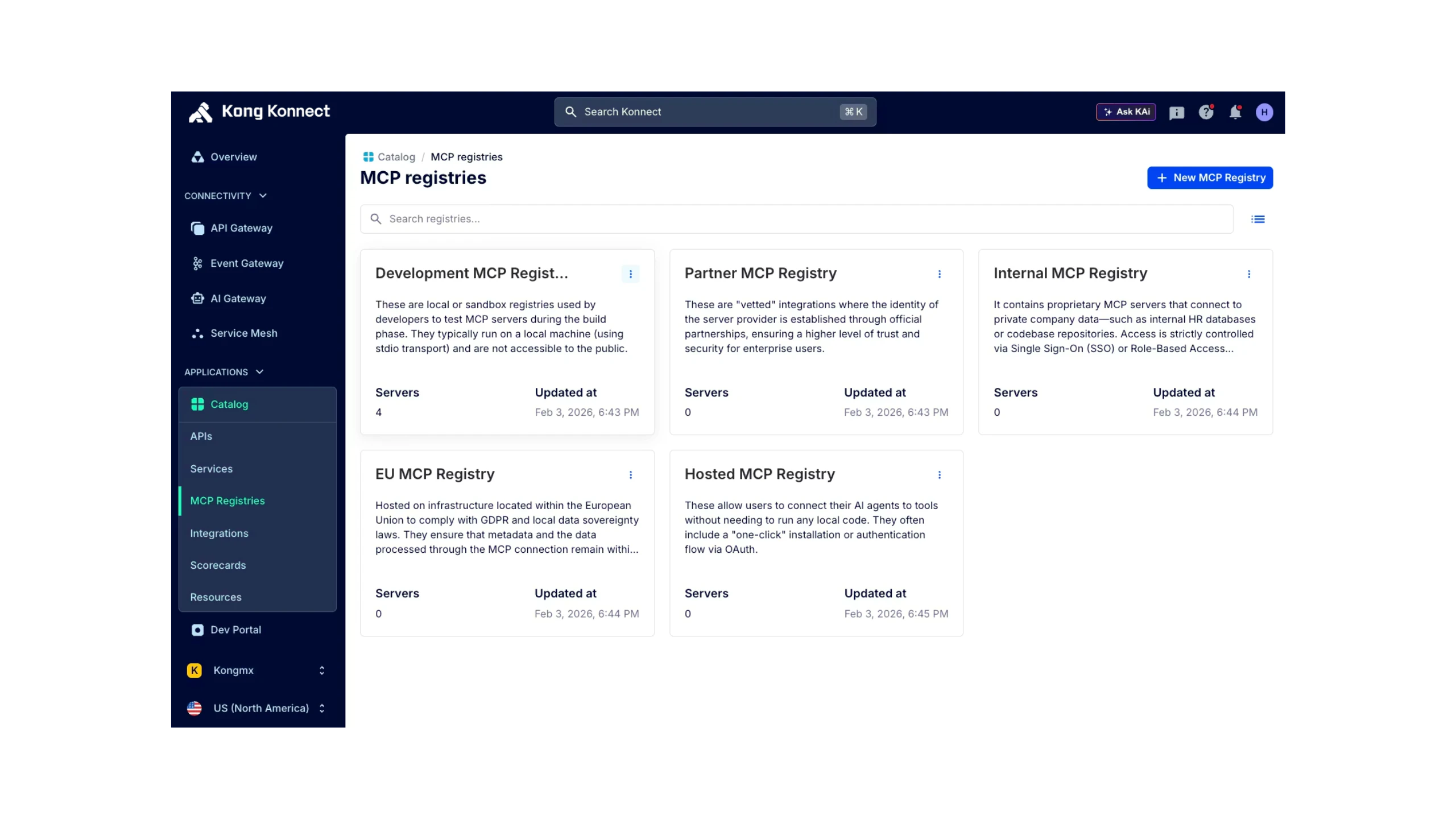

## 05/ Onboard developers and agents

## Build a self-service connectivity registry for agentic consumption and productivity

- Build a great agent experience by opening up secure, self-service access to all of the data and connectivity that AI agents will need to do their jobs well.

- For internal agents, build a context-rich MCP registry.

- For external agents, publish APIs and services in the Konnect Developer Portal.

- Begin to plan out your monetization strategy for agentic API and service consumption.

## 06/ Discover and govern every MCP server and tool

## Register MCP servers for enterprise governance. Publish them for agentic consumption

- Publish MCP servers in a centralized registry so that agents can discover available servers, their endpoints, and capabilities instead of relying on hardcoded configurations.

- Centralize visibility into tool usage, health, and failures across MCP servers, making it easier to monitor behavior and diagnose issues.

- Set up rules around only publishing and consuming approved MCP servers.

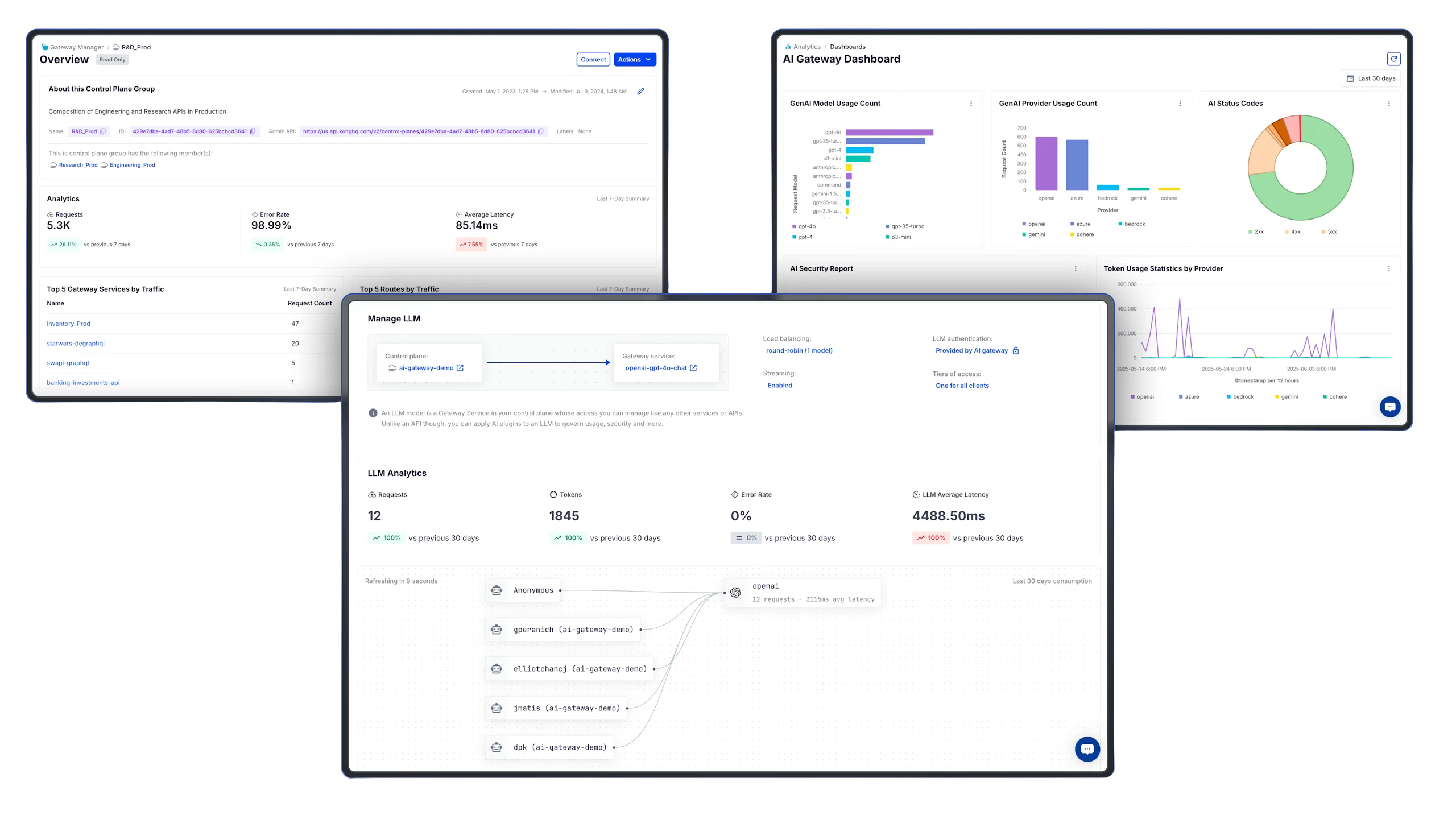

## 07/ Unified observability

## Set up unified observability for API, AI, event streaming, and microservices traffic

- Execs and platform owners get platform-wide insights into overall API and data product consumption, LLM costs, and more.

- SREs and disaster recovery teams have what they need to understand performance and reliability metrics.

- AI teams can see agentic consumption of LLMs, token consumption per model, overall LLM performance, and more.

- Developers can run Active Tracing sessions for deep insight into every API transaction, policy execution, and upstream service behavior.

- API product managers can track monetized API product uptake, see which APIs and services customers are leveraging, and make better-informed API product decisions.

## 08/ Automate best practices

## Enforce automated guardrails across your entire API and connectivity stack

- Automate and enforce security posture management for APIs, AI, events, and microservices.

- Automate with our Admin API, declarative decK CLI tool, Kubernetes operator, or Terraform provider. Nobody else offers this breadth and depth of automation support.

- Enforce guardrails across the *entire* lifecycle through design best practices, gateway policy enforcement, observability by default, and more.

## It’s all about the builders. Make their experience awesome.

Give every human and agentic builder what they need to produce and consume APIs and enterprise connectivity on a self-service basis.