Today, we're excited to announce the general availability of AI Manager in Kong Konnect, the platform to manage all of your API, AI, and event connectivity across all modern digital applications and AI agents.

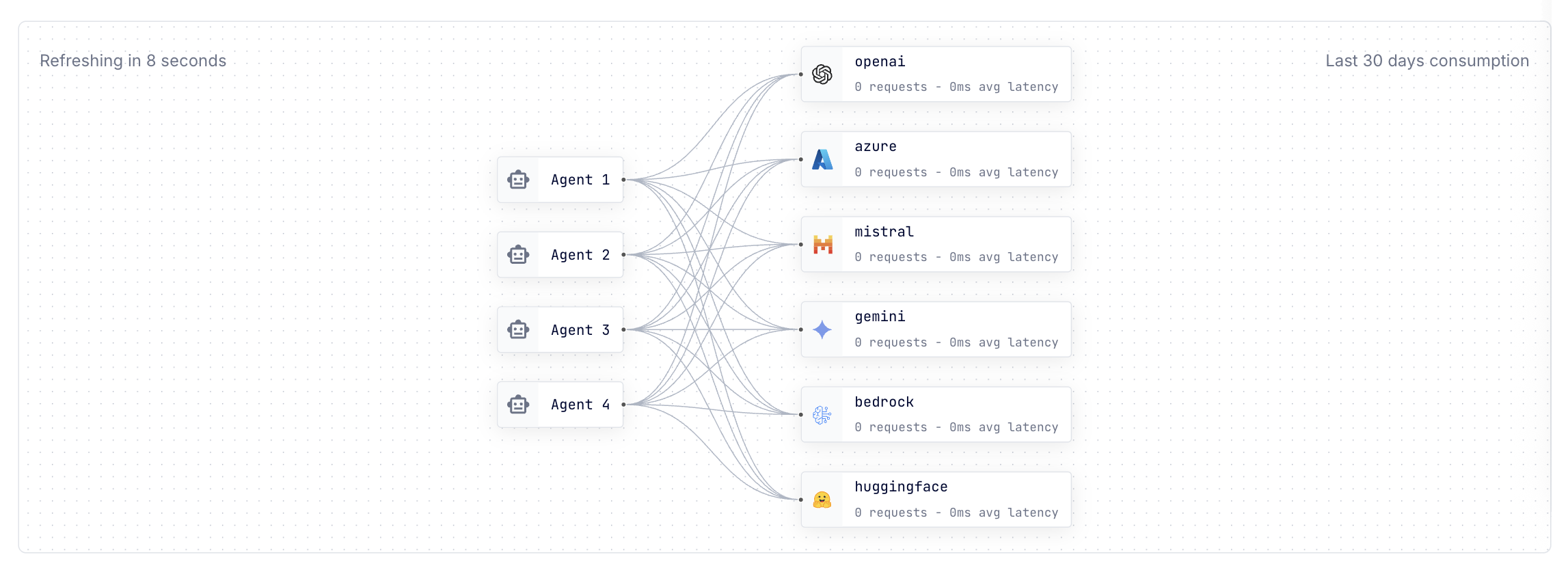

Kong already provides the fastest and most feature-rich [AI Gateway](https://konghq.com/products/kong-ai-gateway) technology to manage your LLM traffic (including [MCP traffic](https://konghq.com/blog/product-releases/securing-observing-governing-mcp-servers-with-ai-gateway)). With these new AI Manager capabilities in Konnect, you can now easily expose LLMs for consumption by your AI agents, and govern, secure, and observe LLM traffic using a brand-new user interface straight from your browser. The new AI Manager capabilities are in addition to the existing API and declarative configuration that you can already use to govern all your LLM and MCP traffic across AI agents and applications via Kong’s AI Gateway technology.

See a video demonstration of the new AI Manager in action below: