Here's the pattern we see over and over:

- Company invests heavily in AI capabilities

- Engineering builds impressive demos and internal tools

- Finance asks "what's the ROI?"

- Silence

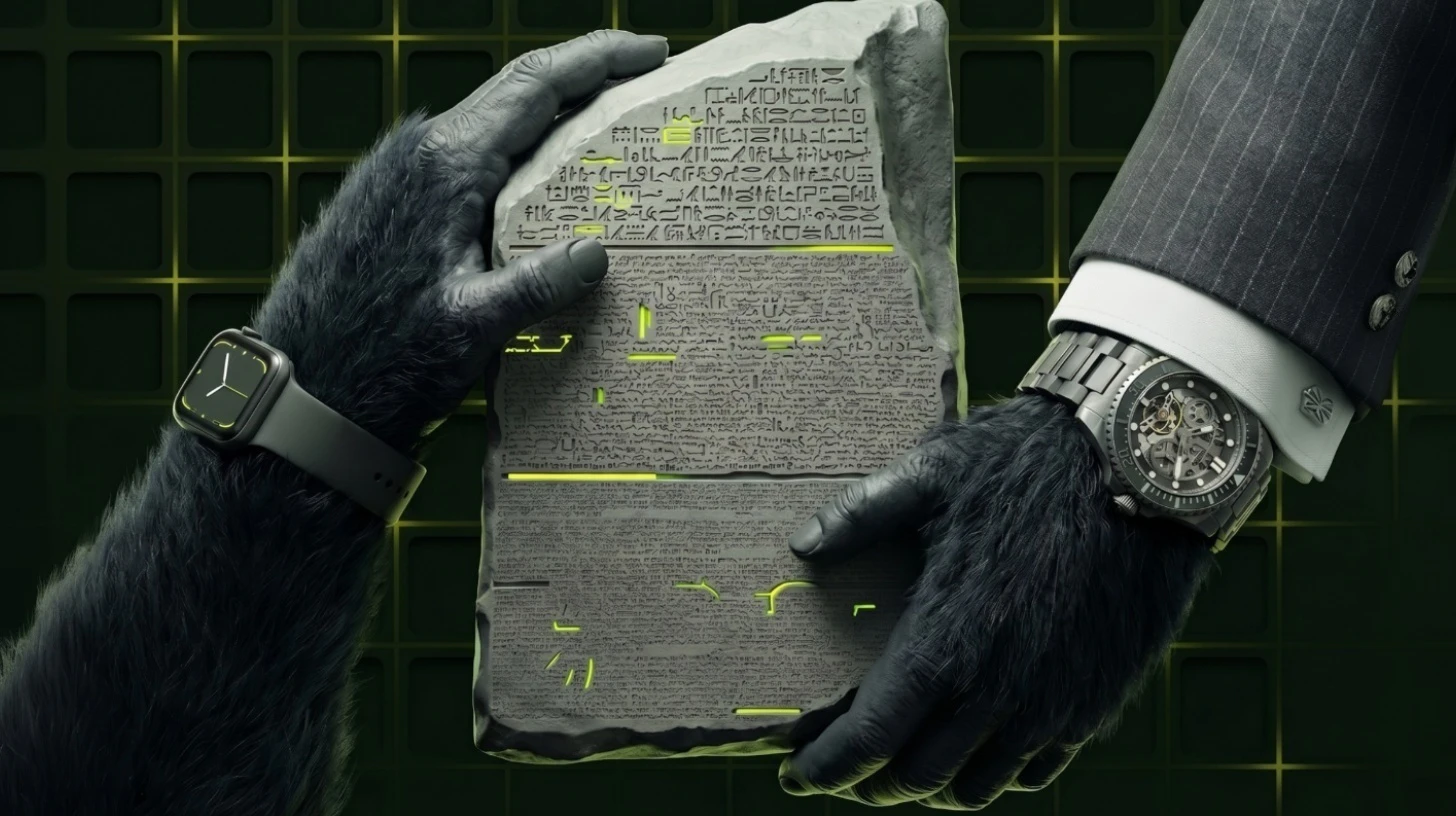

The issue isn't the AI itself — it's the infrastructure gap. Traditional API management was built for a world of predictable, flat-rate access. But AI workloads are different: token-based, usage-variable, model-dependent, and expensive. Without purpose-built metering and billing, you can't:

- **Productize AI capabilities** for external customers or internal teams

- **Provide real-time usage visibility** for customers, product, and finance

- **Enforce usage governance** before costs spiral out of control

- **Price intelligently** based on actual consumption and value delivered

And standalone billing and monetization platforms? They can meter. They can bill. But they can't enforce. When a customer burns through their quota or an internal team blows past their budget, these platforms will tell you about it, but only after the damage is done. Real cost governance means enforcing usage limits at runtime, in real time, in accordance with subscriptions and entitlements. That requires living at the connectivity layer, not sitting alongside it.
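To make the difference between reporting and enforcing concrete, here is a minimal sketch of a check that runs in the request path. It does not depict any particular product's API: `RuntimeEnforcer`, `Entitlement`, the `team-a` subscriber, and the token limits are all hypothetical names and numbers chosen for illustration, and a real gateway would also need persistence, concurrency control, and billing-period resets.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Entitlement:
    # Hypothetical plan data: tokens included in the current billing period.
    token_limit: int
    tokens_used: int = 0


class RuntimeEnforcer:
    """Decides whether a request may proceed BEFORE it reaches the model."""

    def __init__(self) -> None:
        self._entitlements: Dict[str, Entitlement] = {}

    def subscribe(self, subscriber: str, token_limit: int) -> None:
        # Register a subscriber with its (assumed) token entitlement.
        self._entitlements[subscriber] = Entitlement(token_limit)

    def remaining(self, subscriber: str) -> int:
        ent = self._entitlements[subscriber]
        return ent.token_limit - ent.tokens_used

    def handle(self, subscriber: str, tokens: int) -> bool:
        """Return True and meter the usage if it fits the entitlement.

        Return False without metering if the request would exceed the
        limit, so the over-quota call is blocked rather than billed later.
        """
        if tokens > self.remaining(subscriber):
            return False
        self._entitlements[subscriber].tokens_used += tokens
        return True


enforcer = RuntimeEnforcer()
enforcer.subscribe("team-a", token_limit=1000)

first = enforcer.handle("team-a", 600)   # fits within the 1000-token limit
second = enforcer.handle("team-a", 600)  # would total 1200, so it is blocked
```

A metering-only platform would record both requests and surface the overage afterwards; in this sketch the second request never goes through, and `team-a` still has 400 tokens left.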

And here's what often gets missed: **your APIs are part of your AI strategy too**.

The backend services, data APIs, and integration endpoints that power your AI products all contribute to your cost structure — and your monetization opportunity. You can't have a complete AI business model if you're only metering and enforcing limits at the LLM layer while ignoring the APIs that feed it.